Why Game Development Stays on C++17

A veteran game developer with 15 years of industry experience explains why studios resist adopting C++20 and beyond, covering compilation overhead, Visual Studio lock-in, custom STL replacements, and the economic realities of game development.

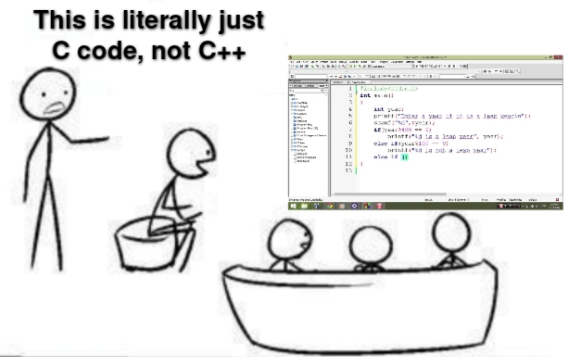

Over the last couple of years at conferences, I've been hearing more and more complaints from acquaintances in game development that the current direction of "modern C++" doesn't align with the needs of game development. The genuinely useful innovations essentially ended with C++17, and attempts to adopt C++20 often result in discovering numerous heisenbugs and a significant performance degradation — a critical 10-15% from build to build for us. Having wandered through various game studios — damn, it's going to be 15 years soon — I do have a thing or two to tell you.

Every modern studio bigger than two and a half people that writes games in C++, C#, or something close either uses Visual Studio or is migrating from homegrown tools to Unreal/Unity, which is also C++, albeit with quirks. Historically, Windows and Microsoft were, are, and for the foreseeable horizon of about ten years will remain the largest market for PC and console games. The consoles themselves long ago became "basically PCs," but vendors will never admit it, to avoid losing exclusives (and shekels).

Mobile is kind of separate with its own Mac and Android issues, but in Visual Studio, in one form or another, 95% of games are created, debugged, and optimized — the rest is margin of error. Since the beginning of the golden age of game development (somewhere in the late 90s), most games were written with the assumption they'd be released on PC, and by PC I mean Windows. And the legacy of many AAA studios is tied to Microsoft in one way or another, even for non-Microsoft consoles and mobile.

Visual Studio and Debugging

Currently, Visual Studio is the best C++ debugger in the world, with an unparalleled ability to attach to, parse, and display practically anything you can think of. If Studio isn't enough, you can open WinDbg, which even lets you program it. Debugging is essentially why many people use Studio, and why they are willing to put up with compiler quirks, weak optimization, a buggy STL, and other issues.

Recently Microsoft added a time-travel debugger and generally provides a ton of capabilities for everything C++ related, if you're working on Windows, of course — from custom symbol servers to distributed builds and compiler scripts, all the way to the fact that PlayStation builds (a FreeBSD system, by the way) can't be built outside the Windows SDK. The SDK for Linux sort of exists, but it's not quite working, you have to dance with a tambourine like the old days, and there's no guarantee it'll work, and questions on the forum in the Linux thread sit unread by support for weeks. With Nintendo (a musl system) it's the same story — they always have fresh SDKs, but good luck building a Switch binary from Linux. How's that for trolling? And when you're used to having all of this at your fingertips, switching from skis to crutches and a hand saw (console debugging) instead of a "Friendship" chainsaw (Studio's debugger) is really not appealing.

Compilation Speed and C++20

C++17 was picked up by developers almost without problems, but the revolution of C++20 and later standards hasn't quite worked out. I really like the compilation speed and strict typing in C++11 and its successors. Strict typing is undoubtedly a hallmark of the recent evolution of C++: we saw a massive expansion of the traits system and got things like nullptr and scoped enums to fight the legacy of pre-Columbian era code. But auto was thrown in as a bonus. It's cool, sure, but auto slows down compilation.

```cpp
void vector<_TYPE_>::preallocate(const size_t count) {
    if (count > m_capacity) {                            // 0.000032s
        _TYPE_ * const data = static_cast<_TYPE_*>
            (malloc(sizeof(_TYPE_) * count));            // 0.000087s
        const size_t end = m_size;                       // 0.000023s
        m_size = std::min(m_size, count);                // 0.000342s
        for (size_t i = 0; i < count; i++) {             // 0.000038s
            new (&data[i]) _TYPE_(std::move(m_data[i])); // 0.000135s
        }
        // ...
    }
}
```

And here's how auto increases compilation time for individual expressions with automatic type deduction, in the worst case nearly threefold. How much auto do you have in your project? I simply replaced the types with auto:

```cpp
void vector<_TYPE_>::preallocate(const size_t count) {
    if (count > m_capacity) {                            // 0.000030s
        auto data = static_cast<_TYPE_*>
            (malloc(sizeof(_TYPE_) * count));            // 0.000195s <<<<<
        const auto end = m_size;                         // 0.000062s <<<<<
        m_size = std::min(m_size, count);                // 0.000452s <<<<<
        for (size_t i = 0; i < count; i++) {             // 0.000042s
            new (&data[i]) _TYPE_(std::move(m_data[i])); // 0.000258s <<<<<
        }
        // ...
    }
}
```

And this is where I really start disliking people who love the "almost-always-auto" style. However well-justified a given use of auto is, it is simply slower to compile, and it also drags down the compilation time of the expressions it participates in.

How to see compiler timing for a specific code section (MSVC):

- /Bt+ reports compilation time for the compiler front end and back end for each file. C1XX.dll is the front end, responsible for compiling source code into intermediate language (IL); time spent at this stage usually depends on preprocessor work (includes, templates, etc.). C2.dll is the back end, which generates object files (converts IL to machine code).
- /d1reportTime reports the work time of the compiler front end; available only in Visual Studio 2017 Community or newer.
- /d2cgsummary reports functions with "anomalous" compilation times. This is useful, try it.

But with C++20 enabled, everything gets even worse. The examples above were built as C++14/17, where timings fluctuated within a 2-3% margin of error. Now look at this: out of nowhere, +10-15% on compiling the exact same code.

```cpp
void vector<_TYPE_>::preallocate(const size_t count) {
    if (count > m_capacity) {                            // 0.000038s <<<<<
        auto data = static_cast<_TYPE_*>
            (malloc(sizeof(_TYPE_) * count));            // 0.000223s <<<<<
        const auto end = m_size;                         // 0.000074s <<<<<
        m_size = std::min(m_size, count);                // 0.000509s <<<<<
        for (size_t i = 0; i < count; i++) {             // 0.000054s <<<<<
            new (&data[i]) _TYPE_(std::move(m_data[i])); // 0.000302s <<<<<
        }
        // ...
    }
}
```

```text
Top 3 (top-level only):
vector: 0.175865s
cstdio: 0.040779s
cstdint: 0.013023s
```

```text
Top 3 (top-level only):
vector: 0.250358s
cstdio: 0.057683s
cstdint: 0.018684s
```

As for strict typing, things aren't so bad there. It's certainly slower to compile than plain types, but it has settled in nicely and is being actively adopted by most studios interested in improving the quality of their C++. If you're willing to sacrifice a bit of build speed, strict types are your friend.

Build Farms, Modules, and Economic Reality

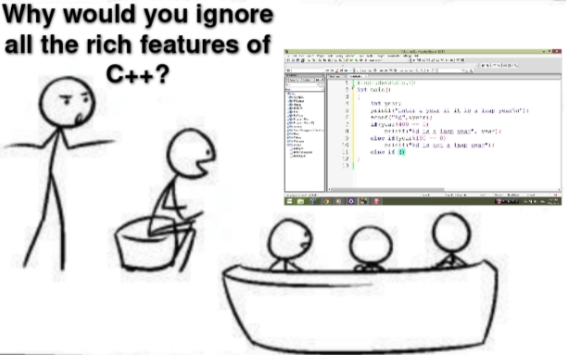

You can ignore compilation time if you work at a non-gaming company with good internal build farm infrastructure and potentially infinite computing power to compile any code you can write with your triple-layered templates. But large companies don't have it easy either — their ten-story templates, code generation, and reflection consume so much that compilation time starts turning into real dollars for hardware — hence modules. Yes, I might say something heretical here, but modules and all the hand-wringing around them were needed by big companies, and they pushed it into the standard.

In terms of game development, for individual studios and solo developers this is completely unnecessary. Indies simply won't have a build farm, and everything is built either on GitHub Actions, or the project has grown and there's something like IncrediBuild and a mini-farm. Those 15-20 minutes for a build get fixed by various hacks and the straight hands of a lead. Modules in their current state slow down or don't change the build time of a large project, if they even work at all, but they require new people for support. A game developer hears about modules either at conferences or from "module salespeople" interviewing for a mid-level position.

You can argue a bit about the relative cost of compilation time and equipment versus programmer time, and I can say that equipment is the cheapest resource you can buy with money, but... there's always another BUT.

But equipment is real money that has to be requested from management, while the cost of hiring, development, and everything else gets spread over some later period. And for many seasoned accounting ladies, it's hard to make this choice, because you have to account for dead raccoons here and now, while code, DevOps, and the game — they come later, in a month, or maybe at the end of the tax period. And company leadership almost always sees only the optimization of current profit. So when you come to management and ask for money so the game compiles slower, they look at you with a smile.

Tools and Custom STL

The next expense item after hardware is tools. You need lots of them, as many as possible: warnings, static analysis, sanitizers, dynamic analysis tools, profilers, and so on. Everything on the market from Cppcheck to PVS-Studio and SonarQube gets used where possible, because a bug found at the compilation or testing stage is time saved that you can spend on development. But here's the problem: all these tools work well on non-Microsoft platforms, Linux and Mac, and as you can understand, those are very atypical for game development.

What do tools have to do with modern C++? One way or another, all these tools work well with standard and more-or-less modern C++, they get updated for new features and language capabilities — otherwise nobody would buy them — while old standards are either just supported so they don't break, or simply ignored. So when you come asking for money for new tools that should support new features that aren't being used, they look at you with a smile.

Okay, open-source tools can still be groomed and made to work on Windows, and you can negotiate porting with the paid ones, but most of these tools are oriented toward standard C++, and... ours isn't standard. They have ready support for std::vector, but I don't use it because I have my own static_vector/double_ended_vector/small_vector/buffered_vector/hybrid_vector/onstack_vector with blackjack and ladies, a custom allocator and equally custom iterators, and these tools can't check it. You can't blame them — they're also made to earn money, and enterprise pays vastly more than all AAA studios combined.
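To make the point about custom containers concrete, here is a minimal sketch of what a fixed-capacity vector could look like. The name static_vector comes from the list above, but the interface shown (emplace_back, pop_back, begin/end) is an assumption made for illustration, not any studio's actual implementation. Storage lives inside the object, so it never touches the heap, and an analyzer with built-in knowledge of std::vector knows nothing about a type like this.

```cpp
#include <cassert>
#include <cstddef>
#include <new>
#include <utility>

// Hypothetical fixed-capacity vector: storage is embedded in the object,
// so no heap allocation ever happens.
template <typename T, std::size_t N>
class static_vector {
public:
    static_vector() = default;
    static_vector(const static_vector&) = delete;             // kept minimal for the sketch
    static_vector& operator=(const static_vector&) = delete;
    ~static_vector() { clear(); }

    // Constructs a new element in place; exceeding the capacity is a logic error.
    template <typename... Args>
    T& emplace_back(Args&&... args) {
        assert(m_size < N && "static_vector capacity exceeded");
        T* slot = ::new (static_cast<void*>(m_storage + m_size * sizeof(T)))
            T(std::forward<Args>(args)...);
        ++m_size;
        return *slot;
    }

    // Destroys the last element.
    void pop_back() {
        assert(m_size > 0);
        --m_size;
        data()[m_size].~T();
    }

    void clear() { while (m_size > 0) pop_back(); }

    T& operator[](std::size_t i) { assert(i < m_size); return data()[i]; }
    std::size_t size() const { return m_size; }
    static constexpr std::size_t capacity() { return N; }

    // Raw pointers serve as iterators, which is enough for range-for.
    T* begin() { return data(); }
    T* end() { return data() + m_size; }

private:
    T* data() { return std::launder(reinterpret_cast<T*>(m_storage)); }

    alignas(T) unsigned char m_storage[N * sizeof(T)];
    std::size_t m_size = 0;
};

// Example: sum a few values without any dynamic allocation.
inline int sum_first_three() {
    static_vector<int, 8> v;
    v.emplace_back(1);
    v.emplace_back(2);
    v.emplace_back(3);
    int total = 0;
    for (int x : v) total += x;
    return total;
}
```

The overflow policy here is a plain assert, which is one possible choice; a real engine container might instead fall back to the heap (the small_vector or hybrid_vector flavor) or report the error through its own channels.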

CI and Testing

And then there's setting up and maintaining a CI pipeline that runs these tools, which requires build engineers, and that can be problematic because hiring people for non-game engineering positions is a systemic pain of the entire industry. I can push my lead to take a couple of mids to help me, but he can't push his boss to add another full QA to our department at half a mid's salary, let alone a DevOps engineer.

So it turns out that most standard tools simply don't do what's expected of them. And if they don't do what they were created for, can they be trusted? This distrust has historically lived in game dev — first we didn't trust frameworks and libraries, and before us our predecessors didn't trust orthodox C, so they wrote in assembly, but thankfully those times have passed. Somewhere around the late 90s, MSVC6 came out and people gradually started trusting C compilers, but C++ was still a sketchy product, so code was written in C, in a C++ style, sometimes even with multi-line comments.

By 2000, the game industry had figured out C++. It was the golden age of design patterns, the first engines, and big research in game dev. Everyone fancied themselves Carmack or Sweeney and brought goodness in the form of custom STLs, since on consoles the STL simply didn't exist, and everyone carried what they could. Consoles back then were the main priority and the primary money-maker. In 2015, I still saw GCC 4.3.5 (from a release series that dates back to 2008) being used for certification builds at Nintendo: along with your main build, you sent a binary that had to be compiled with the old GCC, and the two went through certification together. Only a year later did they switch to Clang, version 3.5 if I remember correctly. The key word is "had to," because many things either didn't compile on version 4 or compiled with quirks.

And in the late 2000s, two revolutions began simultaneously. Huge legacy codebases spilled onto GitHub, problems with class hierarchies led to the development of the component-oriented approach in games and engines, which continues to evolve today, leading to the emergence of Entity-Component-System (ECS), and who knows what it'll turn into next. But is there even a single tool that can check and test component and ECS systems in games, or anywhere else? So when you come to management asking for money for a new tool that's supposed to test something, they look at you with a smile.

The second revolution was the active consolidation of engines, which stopped being a balalaika that only a ten-armed Shiva in the form of the development team could play, and the community gained the ability to influence development. You understand that a million contributors is indeed a million contributors.

Platform Evolution and Memory Control

Against the backdrop of all these changes, the platforms themselves changed. Platforms became truly multitasking, both symmetric and asymmetric. Developers accustomed to Intel had to adapt to custom hardware with heterogeneous CPUs (PS2), then to PowerPC (Xbox 360), then to even more heterogeneous architectures (PS3), and then came the zoo of mobile devices where everyone's on their own. With each new generation of platforms, the requirements for CPU performance, memory, and storage changed. If you wanted optimal code, you had to rewrite it again and again. Sorry, but there's no time for standard changes here. And I haven't even mentioned the impact of the internet on games.

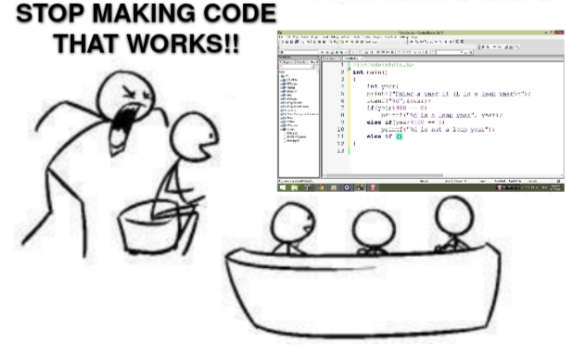

So historically, everyone had their own STL implementation. It's no secret that standard STL containers aren't well suited for games. If I had to choose between std::string and char[256], I'd choose the latter because it doesn't allocate memory. I'll somehow deal with bugs, but many still don't know what to do with memory allocation in std::vector.

Nearly all STL containers have problems with controlling memory allocation and initialization in one way or another, and in games it's important to limit memory for various tasks. Amortized O(1) time isn't good enough — right now it's conditionally 1ms, but three frames later it's 10ms, so on average it'll be 1ms, but the freeze already happened and players are unhappy with the incompetent programmers.
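The amortized-cost problem is easy to see by counting how often a growing std::vector goes back to the allocator. The sketch below is an illustration only: counting_allocator, g_allocations, and allocations_for are hypothetical names introduced here, and the exact growth pattern depends on the standard library in use. Every counted allocation during the push_back loop is a reallocation plus a move of all existing elements, which is the kind of frame that turns 1ms into 10ms.

```cpp
#include <cassert>
#include <cstddef>
#include <new>
#include <vector>

// Number of allocations made through counting_allocator (illustrative global).
inline std::size_t g_allocations = 0;

// Minimal allocator that forwards to operator new and counts each call.
template <typename T>
struct counting_allocator {
    using value_type = T;

    counting_allocator() = default;
    template <typename U>
    counting_allocator(const counting_allocator<U>&) noexcept {}

    T* allocate(std::size_t n) {
        ++g_allocations;
        return static_cast<T*>(::operator new(n * sizeof(T)));
    }
    void deallocate(T* p, std::size_t) noexcept { ::operator delete(p); }
};

template <typename T, typename U>
bool operator==(const counting_allocator<T>&, const counting_allocator<U>&) noexcept {
    return true;
}
template <typename T, typename U>
bool operator!=(const counting_allocator<T>&, const counting_allocator<U>&) noexcept {
    return false;
}

// Pushes `count` ints and returns how many times the vector hit the allocator.
inline std::size_t allocations_for(std::size_t count, bool reserve_first) {
    g_allocations = 0;
    std::vector<int, counting_allocator<int>> v;
    if (reserve_first) v.reserve(count);  // pay the allocation cost once, up front
    for (std::size_t i = 0; i < count; ++i) v.push_back(static_cast<int>(i));
    assert(v.size() == count);
    return g_allocations;
}
```

Comparing allocations_for(1000, false) with allocations_for(1000, true) shows the difference: without reserve the vector reallocates repeatedly as it grows, while the reserved run hits the allocator far fewer times, typically once. Custom containers in games usually exist to make that cost explicit and to move it to a moment the programmer chooses.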

Memory allocation is generally one of the most expensive operations, and nobody wants to miss a freeze due to an unexpected allocation. The same applies to external dependencies — you always need to understand where CPU cycles go, where and when memory is used, why a stall occurred when accessing a resource. But our beloved VS used to change ABI like gloves with every update, and if you had many dependencies, updating the compiler would hang the studio for a couple of days, leading to rebuilding half the SDK and unexpected bugs in old library versions. That's why studios preferred small, easily integrable libraries, ideally built in plain C, that do one thing but do it well — even better if the library is open source with a free license and no mandatory attribution. So when you come asking for money for a library with closed sources and paid support, they look at you with a smile.

And add the C++ game developer syndrome (Not-Invented-Here). You know why game dev loves reinventing the wheel? Because the rakes lie in known places for years — just don't hit them too hard on the turns.

The Boost Story

Game development started with rockstars, with developers who worked on hardware that would come out in a year or two — they simply had no other option but to make their own, here and now. And game development is one of the few software industries where there are credits, and even a rank-and-file programmer will be listed in the game's credits, and the more of your ideas you implemented, the higher your name will be in that list, of course after the names of directors, producers, chief salespeople, their wives, children, and dogs. Writing code from scratch, from your head, until your brain cracks — that's not just fun, it often advances careers and increases the conditional raccoons at the end of the month!

Boost is also quite the bicycle, but it's not particularly loved here. There was one amusing case when an outside person was hired as a lead at a studio — a good, seasoned enterprise developer. He brought some of his own people and sold management on Boost to solve a specific problem. There was an upcoming milestone, nobody could stop him, and the stars aligned, as they say. Boost went straight into the main engine branch.

It worked, can't say everything was smooth, there were difficulties with updates, but nothing critical. A year later the person quit, but the library had already spread like an octopus throughout the entire project, and then the studio got acquired and it was time to merge into another main branch, but the presence of third-party libraries, including respected ones, turned out to be unacceptable. We initially sneaked in a few really simple headers, but that was it. We had to uproot Boost and write our own, stealing some individual parts, rewriting others from scratch. Later we repeatedly had to patch up the quasi-Boost code and derivatives, but taking the ready-made version or updating sources to new ones became increasingly problematic. Now the code was ours, and we had to maintain it ourselves. So Boost 0.9 remained in places, even though 1.2 and 1.3 were already on GitHub. So when you come asking for money for people to pull a new free open-source framework into the engine, everyone remembers Boost and looks at you with a smile.

But primarily, Boost didn't work out because it was too bulky, tried to do too much, and critically increased build time: the project built 4x faster without it. And since the team we were merging into had already been through all of this, the answer was a categorical no. A year of integration, six months of weeding and bugs? No thanks, we don't need that kind of happiness even for free.

And although there are many successful projects that use it, those phantom pains from that failure haunt me to this day, and seeing a Boost folder in a project, I mentally prepare myself for half-hour builds and sheets of template logs about some forgotten pointer. Examples of successful STL and Boost usage in individual projects don't change anything — such is psychology.

Why Custom Libraries Persist

I'm not the only one like this. For the same reasons, many game studios develop their own libraries that replace the STL and offer specialized solutions. But you have to avoid extremes here too. Looking for an alternative to std::map, or wanting a std::vector with small-buffer support, is perfectly reasonable. Writing your own allocators and containers? Who's going to stop you? But writing your own analogues of the standard algorithms or type traits without practical benefit is a questionable decision.

It's a shame that STL is associated primarily with containers. They're usually taught first, so when people say "STL," std::vector comes to mind, when they should be thinking about std::find_if. That's what should really be taught in courses — the ability to use algorithms. Anyone can write a vector.
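The reason algorithms matter more than containers is that they only need a pair of iterators, so they work just as well on a hand-rolled container as on std::vector. Here is a small sketch under that assumption; Enemy, EnemyPool, and first_dead are hypothetical names invented for this illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical game object used only for this illustration.
struct Enemy {
    int id;
    int health;
};

// Minimal hand-rolled fixed-size pool. Exposing begin()/end() is all the
// standard algorithms require; raw pointers act as the iterators.
struct EnemyPool {
    static constexpr std::size_t kCapacity = 4;
    Enemy items[kCapacity];
    std::size_t count;

    const Enemy* begin() const { return items; }
    const Enemy* end() const {
        assert(count <= kCapacity);
        return items + count;
    }
};

// Returns the first enemy whose health dropped to zero or below, or nullptr.
inline const Enemy* first_dead(const EnemyPool& pool) {
    const Enemy* it = std::find_if(pool.begin(), pool.end(),
                                   [](const Enemy& e) { return e.health <= 0; });
    return it != pool.end() ? it : nullptr;
}
```

The container took a dozen lines and the search took one call. Swapping EnemyPool for any other container with begin() and end() leaves first_dead unchanged apart from the parameter type.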

Testing (or Lack Thereof)

What's even worse than custom STLs is the virtual absence of automated testing. Why? Because nobody needs it, because correctness isn't that important, and there's no clear specification. "Good enough" is fine, as long as it's fun. That's the whole essence of game testing. When I first got into development, I was very quickly disabused of the notion that striving for realistic modeling was even worthwhile.

Games are always tricks, whispers behind the curtain, T-poses behind your back, simplifications, and deception. Nobody cares about simulation accuracy when you don't have a clear spec beyond "it should be awesome" — there's essentially nothing to test. To put it bluntly — games aren't like other areas of C++ where lack of correctness and crashes can threaten someone's safety or money. Well, maybe it does, but it's your own fault — you bought everything in the first week of sales, now wait for first-year patches.

Games have taught publishers that code doesn't live long. What matters is that the game doesn't lag too much at release — that's the vendor requirement. We still have to test it, but automation isn't mandatory. For management, testing is a waste of time and money that requires experienced engineers who could otherwise be writing code or designing levels, yet the results of their work are barely visible. Why write a test that a level hasn't broken after the hundred-thousandth launch?

That time is better spent creating new features, content, and music. In the short term, it's much cheaper to use hired QA units from India, which you can rent at a couple thousand heads for a C++ senior's monthly salary, and who will non-stop play builds for a day, a week, a month... So when you come asking for money for QA, they look at you with a smile.

Debugging Doesn't Scale

Besides the testing problem, there's another issue — debugging scalability, or rather non-scalability. It's like the joke about nine women and the theoretical possibility of getting a baby in one month.

The main problem with debugging is that it doesn't scale. You can't take ten programmers and fix a bug in T/10 time. In the ideal case you'll get T/1.5 with pair work; beyond that, the total real time to fix a bug only increases.

That's because a bug reaches a programmer as dumps or repro steps, and what do we do — we launch the debugger and set breakpoints. Sure, setting breakpoints can help find obvious problems like crashes, but what do we actually do with the real bugs? The ones that remain after we fix everything else? The ones that happen only under network load, during memory shortage, in data races, or with some small, not yet identified group of millions of players, or on damaged RAM sticks, in the Estonian build, at 3 AM on a Monday?

We do nothing, because we know nothing about them. That's where QA comes in — they maximally narrow the error area, saving programmer time by processing sufficiently large datasets without code knowledge, without source code, trying to isolate the problem, make it happen more often, reading logs and carefully analyzing. And then they send it to me, and what do I do — I launch the debugger or meditate over logs.

And I have to dive head-first into "modern C++," set breakpoints on specific data that interests me, but the debugger is the same as always. But C++ is new, bringing more and more optimizations, doing copy elision for temporaries, moving values through registers instead of the stack, even if I didn't ask for it.

This doesn't affect the ability to debug; it's just that the compiler less and less generates the code I expect to see. And post-17 standards increasingly change the code I wrote. Perhaps at some point, nothing of my code will remain, and there'll be yet another layer of bytecode (there were proposals in Clang to pull certain IR parts higher and do dynamic dispatching for different processor instruction sets) that adapts to the processor on the fly. So when you come asking for money and time for a new standard that costs more of your time debugging, they look at you with a smile.
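The copy elision mentioned above is a good example of the compiler not generating the code you wrote. The sketch below uses a hypothetical Tracker type that counts its own copies and moves. Since C++17, returning a temporary constructs it directly in the caller's storage, so a breakpoint placed in either constructor is never hit.

```cpp
#include <cassert>

// Counts how often Tracker objects are copied or moved (illustrative type).
struct Tracker {
    static inline int copies = 0;
    static inline int moves = 0;
    int value;

    explicit Tracker(int v) : value(v) {}
    Tracker(const Tracker& other) : value(other.value) { ++copies; }
    Tracker(Tracker&& other) noexcept : value(other.value) { ++moves; }
};

// Returns a prvalue: since C++17 the object is built directly in the
// caller's storage, so neither the copy nor the move constructor runs.
inline Tracker make_tracker(int v) { return Tracker(v); }

// Returns how many copy and move constructor calls were observed.
inline int observed_copies_and_moves() {
    Tracker::copies = 0;
    Tracker::moves = 0;
    Tracker t = make_tracker(42);  // a breakpoint in Tracker(Tracker&&) never fires here
    assert(t.value == 42);
    return Tracker::copies + Tracker::moves;
}
```

Under C++17 rules observed_copies_and_moves() returns 0. The source reads as if a temporary is created and then moved into t, but no such step exists in the generated code, and that is exactly the gap between what you wrote and what you end up stepping through.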

Conclusion

Then you go back to your desk and keep using C++17. You don't need to adopt new features you don't like. Practically everything you do now will continue to be supported — I'm confident — for the next two console generations, which is 7-10 years. You'll still benefit from compiler improvements in the future. That's a normal strategy.

I know at least a dozen projects and engines, including fairly large ones like the one where I work now, that still use C++98 with a sprinkling of lambdas, tuples, and coroutines, ignoring everything else. And I must say — C++98 remains an excellent language for writing games, often winning in build speed and release performance. But at some point you'll have to face changes, because you'll need to hire other people. More and more often, they'll be C++ engineers who only know "modern C++." And one Monday, a generational developer shift will happen, just as it did with assembly, C, C++98, and C++11. You can't freeze functionality and postpone compiler updates forever. Or can you?
