We Built a World We No Longer Understand: Why NASA Can't Replicate Its Own Engine

We interact daily with technologies we don't truly understand — and that gap between familiarity and comprehension is more dangerous than it seems. From fake bomb detectors costing lives in Iraq to NASA being unable to recreate its own moon rocket engine, the cost of technological illiteracy is very real.

Right now, before you scroll any further, pause and picture a bicycle. The most ordinary two-wheeled one — the kind you've seen hundreds of times in your life. You may have even ridden one last summer.

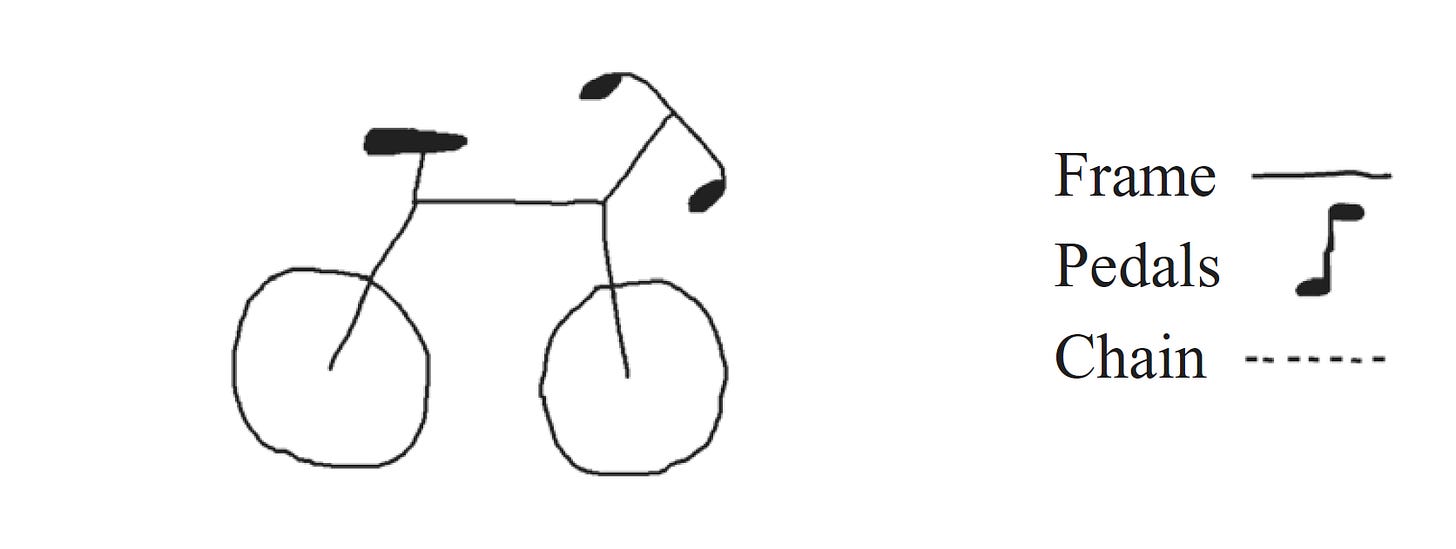

Now, a thought experiment. Try to sketch its mechanical diagram in your head — or better yet, on a piece of paper. How does the frame connect the front and rear wheels? How is the chain routed and where are the pedals?

If your bicycle looks like a surrealist contraption that will never move — welcome to the club. You've just encountered a phenomenon that cognitive psychologists call the "illusion of explanatory depth." The problem isn't that we draw badly. The problem is that we're convinced we know how things work, when in reality our understanding stops at the level of the user interface.

More Than 200 Subjects and One Impossible Bicycle

In 2006, English cognitive psychologist Rebecca Lawson of the University of Liverpool published a study with the telling title The Science of Cycology, in which she examined the question: "Do we really know how the everyday things we constantly see actually work?"

Lawson ran a series of experiments involving a total of 175 "ordinary people" (students and their parents), as well as a separate group of 68 expert cyclists. The task was simple: complete a schematic sketch of a bicycle — frame, pedals and chain. To rule out excuses like "I can't draw," participants in the second part of the experiment were asked to select the correct diagram from several options.

The results were disheartening. In the group told in advance that the test was specifically measuring functional understanding, 50% of subjects made errors when drawing the chain. The frame situation was even more interesting: while only 23% drew it incorrectly, 46% chose the wrong diagram in the multiple-choice test. Expert cyclists made fewer mistakes, but ordinary participants consistently got the mechanics wrong despite being confident in their knowledge beforehand.

The thing is, our brain is a device with limited computational resources, optimized for efficiency. We perceive the world through the lens of "affordances," or action possibilities. We know that if we push a pedal, the bicycle moves. Torque transmission, chain tension, and frame geometry all get folded by our brain into one convenient "black box."

As a rule, this isn't a serious problem. We design technology so it can be used without knowing what's inside. Good industrial and UX design makes devices intuitively understandable. But the danger lies not in ignorance itself, but in false confidence that we understand how everything around us works.

The Rabbit Hole of Everyday Life

With a bicycle you can figure things out by spending a few minutes examining the frame. With modern technology, that approach fails completely. How does plumbing work? How is an air conditioner built? What happens inside a microprocessor as you read these lines? You can fall into the mechanics of everyday objects like Alice tumbling into Wonderland.

For example, when you launch a maps app to find the nearest coffee shop, your smartphone relies on effects from both theories of relativity. GPS satellites orbit Earth at about 14,000 km/h. According to special relativity, their speed makes time run slower for them — by about 7 microseconds per day. On the other hand, the satellites are 20,000 km up, where Earth's gravity is weaker. According to general relativity, time runs faster in a weaker gravitational field — by about 45 microseconds per day.

The net result: the atomic clock on a satellite gains 38 microseconds every day. Seems trivial? But in navigation, time is distance. A 38-microsecond error would produce a positional error of about 11 kilometers per day. If engineers hadn't accounted for Einstein's equations, your GPS would show you in a neighboring city within a day. Does the average navigation user know this? No. They just need to turn right in 200 meters.
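
The arithmetic behind these figures can be reproduced in a few lines. What follows is a rough sketch, not how GPS receivers actually apply the correction: it assumes a circular orbit at the 20,000 km altitude quoted above, uses the standard weak-field approximations for both effects, and ignores the ground clock's own motion due to Earth's rotation. The constant and variable names are chosen here for illustration.

```python
import math

C = 299_792_458.0          # speed of light, m/s
GM_EARTH = 3.986004418e14  # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6.371e6          # mean Earth radius, m
ALTITUDE = 2.0e7           # satellite altitude used in the text (about 20,000 km), m
SECONDS_PER_DAY = 86_400.0

r_orbit = R_EARTH + ALTITUDE
v_orbit = math.sqrt(GM_EARTH / r_orbit)  # speed on a circular orbit, m/s

# Special relativity: a moving clock runs slow by roughly v^2 / (2 c^2).
sr_loss_us = v_orbit**2 / (2 * C**2) * SECONDS_PER_DAY * 1e6

# General relativity (weak field): a clock higher in Earth's potential
# runs fast by roughly GM/c^2 * (1/R_earth - 1/r_orbit).
gr_gain_us = GM_EARTH / C**2 * (1 / R_EARTH - 1 / r_orbit) * SECONDS_PER_DAY * 1e6

net_gain_us = gr_gain_us - sr_loss_us

# "Time is distance": an uncorrected clock offset times the speed of light.
range_error_km = net_gain_us * 1e-6 * C / 1000

print(f"orbital speed:       {v_orbit * 3.6:,.0f} km/h")
print(f"SR slowdown per day: {sr_loss_us:.1f} microseconds")
print(f"GR speedup per day:  {gr_gain_us:.1f} microseconds")
print(f"net gain per day:    {net_gain_us:.1f} microseconds")
print(f"range error per day: {range_error_km:.1f} km")
```

Under these simplifications the results land close to the rounded figures quoted above: an orbital speed near 14,000 km/h, a net clock gain in the high 30s of microseconds per day, and a range error of roughly 11 kilometers.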

Or take music compression — MP3 or streaming on Spotify. It's built on psychoacoustics — an entire science at the intersection of biology, physics, and psychology. Compression algorithms cut from a track the sounds we won't hear against the background of other sounds. The effect of frequency masking means a loud sound at one frequency makes us "deaf" to quieter sounds at neighboring frequencies. We're not listening to the original music, but to a version optimized for the bugs of our own auditory system.
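
To make the idea of frequency masking concrete, here is a deliberately crude toy. It is not how MP3 or any real codec works: real encoders use detailed psychoacoustic models, while this sketch simply drops a tone when a much louder tone sits close to it in frequency. The `Tone` type and both threshold constants are hypothetical values invented for the illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tone:
    freq_hz: float
    level_db: float


# Hypothetical toy thresholds, not taken from any real codec.
MASK_BANDWIDTH_HZ = 200.0  # how far a loud tone's masking reaches
MASK_MARGIN_DB = 20.0      # how much louder the masker has to be


def is_masked(tone, tones):
    """True if some other tone is close in frequency and much louder."""
    return any(
        other is not tone
        and abs(other.freq_hz - tone.freq_hz) <= MASK_BANDWIDTH_HZ
        and other.level_db - tone.level_db >= MASK_MARGIN_DB
        for other in tones
    )


def drop_masked(tones):
    """Keep only the tones this toy model considers audible."""
    return [t for t in tones if not is_masked(t, tones)]


spectrum = [
    Tone(1000.0, 80.0),  # loud tone
    Tone(1100.0, 50.0),  # quiet neighbor, hidden by the loud tone
    Tone(5000.0, 50.0),  # equally quiet, but far away in frequency
]
kept = drop_masked(spectrum)
print([t.freq_hz for t in kept])  # the 1100 Hz tone is discarded
```

The quiet tone at 5000 Hz survives while the equally quiet tone at 1100 Hz does not, which is the whole point: what gets thrown away depends on what else is playing, not only on how loud a sound is.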

From Technology to Magic

Arthur C. Clarke, the science fiction writer and futurist, formulated a law that is more relevant than ever: "Any sufficiently advanced technology is indistinguishable from magic."

For most users, modern technology crossed that threshold long ago. When a smartphone recognizes a face, it's magic to the layperson. When a neural network writes a thesis, it's magic. When contextual advertising offers you something you were just thinking about, it's unsettling magic.

The danger is that when technology becomes magic, critical thinking switches off. If you don't understand the physics of the process, you can't assess the risks and you can't distinguish real technology from pseudoscientific imitation. You stop being a user of a tool and become a believer — and believers are easy to deceive.

Costly Ignorance

At the everyday level, basic critical thinking, some knowledge of physics, and the habit of thinking before acting are enough to guard against the dangers that come from not understanding how technology works. But things get far more interesting when this problem scales to the level of institutions.

Let's move to Iraq in the late 2000s. Its people were suffering from terrorist attacks; every car at a checkpoint was a potential car bomb. Soldiers and police were looking for any means to survive and protect civilians. And then a miracle device appeared on the scene: the ADE-651.

Outwardly it looked like a prop from a low-budget 1980s science fiction film. A black pistol-grip handle with a long metal antenna attached on a swivel. No batteries, no display — and a price tag starting at $8,000 per unit.

The manufacturer, British company ATSC led by James McCormick, claimed fantastic capabilities. According to the brochures, the ADE-651 could detect explosives, drugs, ivory, and human bodies at distances of up to one kilometer. Its operating principle was described using a collection of pseudoscientific terms: "electrostatic magnetic ion attraction."

When the device fell into the hands of independent experts from Cambridge, they expected to find at least some primitive electronics inside. But the handle was empty. The antenna was an ordinary telescoping rod that rotated freely on its swivel, responding to the micro-movements of the operator's hand.

Included with the device were swappable cards labeled "TNT," "C4," "Heroin" — supposedly "programmed" to the frequency of the target substance. Inside the cards, experts found anti-theft tags — the penny stickers you find on books in shops. In some versions there wasn't even that — just a piece of plastic. The handle had no reader anyway. Users were simply inserting one piece of plastic into another.

How could the device work without batteries? The manual claimed the ADE-651 was powered by static electricity from the operator's own body. You needed to shuffle your feet on the ground to "charge up." Any first-year physics student should have laughed in the salesman's face, but generals were buying.

In practice, the illusion of functionality was created through ideomotor action, the same effect that makes a pendulum move in a psychic's hands. When an operator under stress sees a suspicious car, their muscles make microscopic, unconscious contractions. The antenna amplified those micro-movements and swiveled in the same direction, appearing to confirm the suspicion. Classic dowsing, packaged in tactical military design. McCormick had simply bought a batch of cheap novelty "golf ball finders" for $20 each, relabeled them, and marked up the price several hundredfold or more.

Iraq spent more than $85 million buying these magic wands. The ADE-651 became the primary screening tool at all entrances to Baghdad. The devices were also purchased by Afghanistan, Pakistan, Lebanon, Mexico, and Thailand.

How many people died because of the ADE-651? The exact number is unknown, but the count runs into the hundreds if not thousands. Trucks carrying explosives passed freely through checkpoints. And every time after a terrorist attack, officials would say something like: "The operator must have used the device incorrectly. We need more training." Nobody wanted to admit the emperor had no clothes.

James McCormick was convicted of fraud in 2013 and sentenced to 10 years in prison. Yet at the time of his conviction, ADE-651 units were still in use at some checkpoints in the Middle East.

This story shows what happens when the people making decisions lack the competence to distinguish science from magic. Apparently there was no technical consultant around to say: "Sir, this violates the laws of physics" — or if there was, they weren't being listened to.

The Illusion of Control

Many professionals, engineers, and scientists themselves don't fully understand how the technology they work with operates. Software developers know this better than anyone.

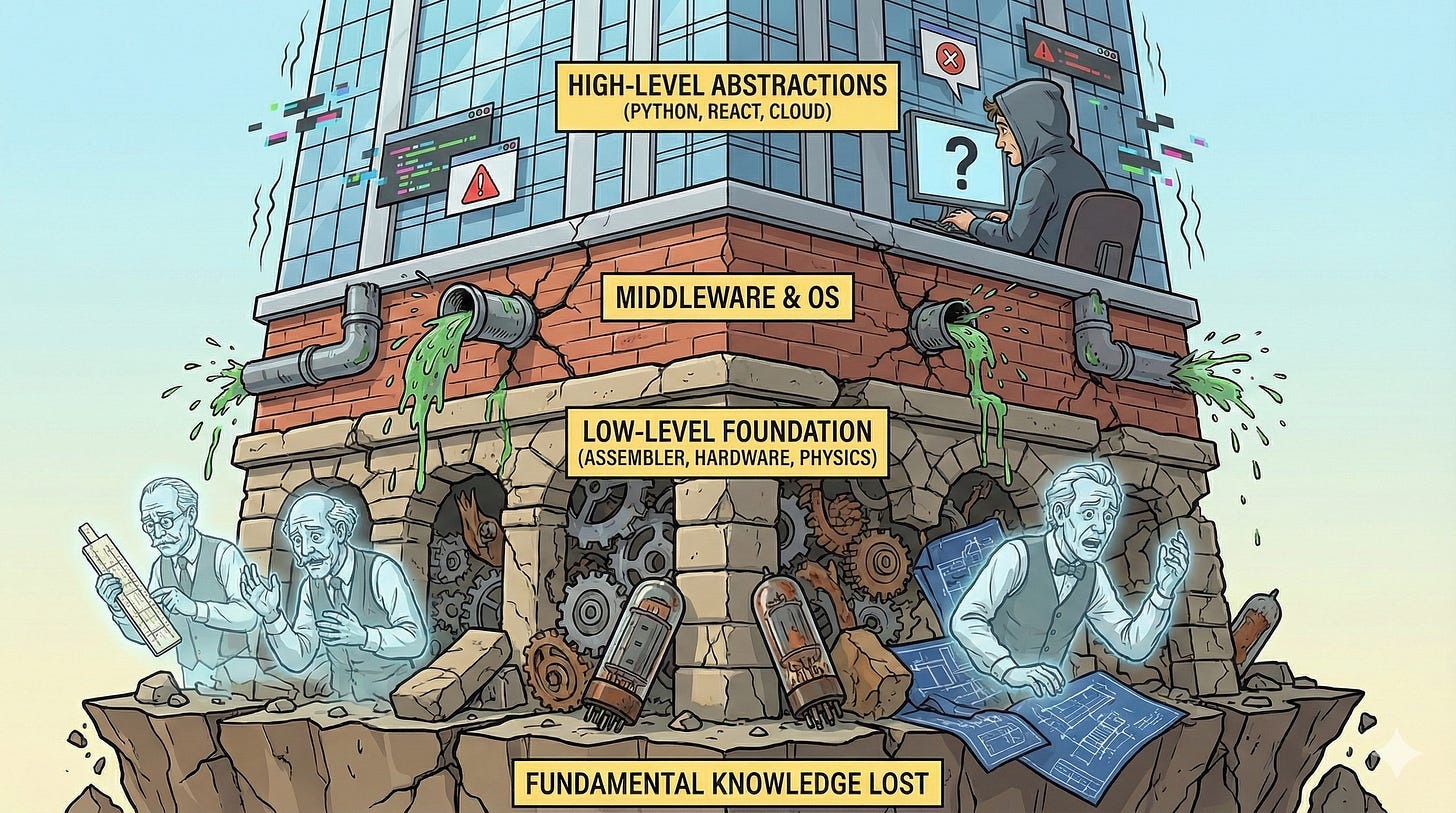

In building computers, humanity has constructed a tower of abstractions. At the very bottom is physics: electrons moving through semiconductors. Above that, micro-architecture: logic gates assembling into adders and registers. Then machine code and assembly: direct commands to the processor where the developer still controls every byte of memory. Above that, languages like C/C++, where the programmer still manages memory manually. Then high-level languages like Python, Java, JavaScript — at this level a garbage collector appears and the programmer no longer thinks about freeing memory. And finally — modern frameworks and no-code platforms, where programmers drag squares around in a visual editor.

The higher we climb this ladder, the less we understand what's happening in the basement. A modern junior developer writing React or using heavy Python libraries for data science often has no idea how their code translates into processor instructions. They don't need to — at least until something breaks.
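
You can peek one floor down without leaving Python. The sketch below uses the standard-library `dis` module to list the bytecode instructions the interpreter runs for a one-line function. This is still interpreter bytecode, several levels above actual processor instructions, and the exact opcode names differ between Python versions; the function `add` is just an illustrative example.

```python
import dis


def add(a, b):
    return a + b


# List the bytecode instruction names the interpreter executes for add().
opnames = [ins.opname for ins in dis.get_instructions(add)]
print(opnames)
```

Even a one-line function expands into several separate steps: load the arguments, perform the addition, return the result. Each of those steps is in turn implemented by many machine instructions inside the interpreter.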

Joel Spolsky, one of the creators of Stack Overflow, called this the "Law of Leaky Abstractions." Every non-trivial abstraction is, to some degree, "leaky." When you click a button in a pretty interface and the app freezes, it means the abstraction has leaked: somewhere five levels down, the process ran out of file descriptors or hit a hash collision, failure modes you never suspected existed.
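
A hash collision is easy to stage. A Python `dict` presents itself as a simple key-to-value mapping with fast lookups, but that promise quietly depends on keys having well-distributed hashes. The sketch below is an illustration with a made-up `Key` class, assuming CPython's dictionary implementation: it counts how many equality comparisons the dictionary performs while inserting 200 keys, first with distinct hashes and then with every key forced to the same hash.

```python
def count_eq_calls(hash_fn, n=200):
    """Insert n distinct keys into a dict and count __eq__ calls."""
    calls = 0

    class Key:
        def __init__(self, value):
            self.value = value

        def __hash__(self):
            return hash_fn(self.value)

        def __eq__(self, other):
            nonlocal calls
            calls += 1
            return self.value == other.value

    table = {Key(i): i for i in range(n)}
    assert len(table) == n  # all keys are distinct, none overwritten
    return calls


well_spread = count_eq_calls(hash)           # each key gets its own hash
all_colliding = count_eq_calls(lambda v: 0)  # every key gets the same hash

print("comparisons with distinct hashes: ", well_spread)
print("comparisons with colliding hashes:", all_colliding)
```

The interface is identical in both runs, but with colliding hashes each new key has to be compared against the keys already stored, so the work grows roughly with the square of the number of keys. Nothing in the code that uses the dictionary hints at this until the program slows down.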

This fundamental ignorance of the "lower floors" has direct consequences. Have you noticed that new software takes up more and more space and demands more and more resources, while its functionality barely grows? Apple M-series processors or top-of-the-line Intel Core i9s have performance that NASA engineers from the Apollo program would have considered divine. Yet a messenger like Slack can take 10 seconds to open and consume a gigabyte of RAM.

This is described by Wirth's Law: "Software is getting slower more rapidly than hardware becomes faster." Want to make a notes app? Instead of writing optimized native code, take the Electron framework. Effectively, each such app ships with its own copy of the Chromium browser engine. This is monstrous from an engineering standpoint, but fast and cheap from a business perspective. We've created a development culture where understanding how things actually work is not valued. "Hardware will handle it."

The Archaeology of Code and Hardware

We're used to thinking of technological progress as a straight line pointing upward. We're confident that knowledge accumulates like interest in a bank account. If we invented something once, that knowledge stays with us forever, right?

Unfortunately, no. Technologies are not static artifacts stored in a library. They are living processes that die with the people who carry them. And the most striking proof of this is not in the lost secrets of Egyptian pyramid construction, but at NASA.

In the early 2010s, when NASA began seriously discussing a return of humans to the Moon, the agency needed a super-heavy launch vehicle. Nobody wanted to reinvent the wheel — the U.S. already had the most powerful single-chamber liquid-fuel rocket engine in history: the Rocketdyne F-1. This monster powered the first stage of the Saturn V rocket. Five of these engines burned 13 tons of kerosene and liquid oxygen per second. The F-1 sent people to the surface of the Moon and never failed once. And NASA had all the blueprints — thousands of kilograms of technical documentation.

Engineers reasoned: "We'll take the 1960s blueprints, digitize them, load them into modern CNC machines, and get a proven engine." But when they tried to do it, they ran into a wall. Something was missing.

Specialists went to museums, removed several preserved F-1 specimens from their pedestals, and began disassembling them literally bolt by bolt. And that's when they discovered the hardware didn't match the blueprints. Moreover, different engines didn't even match each other.

In the 1960s, machine precision was lower and automation was in its infancy. The lack of equipment precision was compensated by human skill. Every part was fitted by hand, on-site.

As they disassembled the engines, engineers found parts that had been drilled in unexpected places or bent differently than the diagrams showed. During assembly on the test stand in the 1960s, engineers would notice: "There's vibration here." They'd grab a drill, make an additional hole, test it — works better. Leave it. Changes weren't always entered into the documentation.

In sociology this is called tacit knowledge — implicit knowledge that cannot be conveyed through a manual. It's experience that lives at the fingertips of the craftsman. The people who built the F-1 were the best engineers of their time. Much was born in arguments right next to the test stand and never made it into official reports: "Why write down the obvious? We all understand here that you need to turn the valve half a turn." The problem is that these people retired, died, and took that knowledge with them.

After spending time on reverse engineering, NASA arrived at a paradoxical conclusion: restoring F-1 production in its original form was impossible. Entire industries would need to be recreated, people trained in forgotten techniques, supply chains rebuilt for materials no longer manufactured. It was simpler to design a new engine from scratch. So technology is not a blueprint in a safe. Technology is people, production culture, and supply chains. Break that chain for one generation, and knowledge becomes an archaeological artifact.

What Can Be Done?

The world as a black box, technology as magic, lost ancestral knowledge: if we stop here, the picture looks grim. But nihilism is a poor tool for an engineer, and recognizing the problem is already half the solution. The other half lies in a paradigm shift: we need to stop being passive consumers of "magic" and begin actively archiving, structuring, and spreading knowledge about how the world works.

Cross-Pollination of Knowledge

One of the main problems of modern engineering is narrow specialization. We dig deep wells but don't build tunnels between them. Yet the most elegant solutions often lie at the intersection of fields that seem completely incompatible at first glance.

In the early 2000s, doctors at London's Great Ormond Street Hospital faced alarming statistics. Despite the surgeons' exceptional skill, mortality and complications remained high during patient handoffs — when a child was transferred from the operating room to intensive care after heart surgery. Life support systems, monitors, and drips all needed to be reconnected, enormous amounts of data transferred — all under time pressure and stress.

The doctors realized their problem wasn't medical but logistical. And they began looking for who in the world was best at servicing complex equipment under stress. They ended up inviting Ferrari Formula 1 technicians to the hospital. They showed them video recordings of operations and asked: "What are we doing wrong?" The engineers, accustomed to changing four tires in 2–3 seconds, were horrified by the chaos in the operating room.

In a pit stop there's a person with a sign who alone has the authority to give the "Go" command. The operating room designated a similar leader. The hospital also introduced protocols of silence at critical moments, a clear scheme for positioning people around the patient, and strict checklists with standardized signals.

The result: technical errors dropped by 42%, information transfer errors by 49%. Knowledge developed to make small cars go faster around a track saved children's lives.

Radical Openness

The second problem is that we bury our mistakes. Corporate culture favors publishing only success stories. Nobody issues press releases saying: "We spent 10 years, burned through $50 million, and came up with nothing." Yet a negative result is an invaluable engineering asset.

Makani, part of Google X, spent 13 years trying to revolutionize green energy. They created energy kites — carbon fiber gliders with rotors that climbed to heights of up to 500 meters, caught wind, and transmitted electricity to the ground via cable. In 2020 the project was shut down because solar panels and conventional wind turbines had become cheap enough that the complex kites were no longer competitive.

A typical corporation would have archived everything and laid off the engineers. Makani did something different — they published The Energy Kite Collection: source code, prototype blueprints, flight logs, detailed accident analyses, and a documentary about the project. I'm sure it was studied very carefully in China, where a third generation of flying electric generators is now being tested.

NASA, having learned from the F-1 engine experience, also changed its approach. They have the NASA Lessons Learned Information System portal, where engineers describe problems — from stuck solar panels to management errors. They record both "what broke" and the decision-making logic that led there. This is an attempt to preserve the wisdom that previously passed only in hallway conversations.

Conclusion: Technosphere or Magic?

Futurists love to talk about technological singularity in the context of creating strong AI that will begin to self-improve. But to me, singularity looks different. It's more like a complexity horizon — a moment when the total complexity of the technosphere exceeds humanity's cognitive capacity to fully understand it. And it seems we've already crossed that threshold.

No single person on Earth today can explain and reproduce, on their own, the entire chain of technologies needed to manufacture a modern smartphone, from mining rare-earth metals to the operation of 5G network protocols. We've all become narrow specialists maintaining tiny fragments of a giant technological mosaic.

Now we're inserting into this foundation the most opaque brick of all — generative neural networks. Even the creators of ChatGPT, Claude, or Gemini face the problem of interpretability. An engineer knows the architecture: how many layers in the transformer, what activation function. But they don't know why, in response to a specific query, the model generated exactly that text — because the knowledge is smeared across billions of weights in the model's matrices.

We're learning prompt engineering — essentially the art of incantations. We select words so the "spirit in the machine" does what we need, without understanding the mechanics of the process. If a developer doesn't understand their own code (because Claude generated it), and the user doesn't understand the device, we find ourselves in a world where technology becomes a matter of faith, not knowledge.

Remember that uncomfortable feeling when you leave your phone at home? You feel not just "disconnected" but dumber. You've lost access to your exocortex: your external memory, navigator, and reference library. We are already cyborgs; our implants just sit in our pockets rather than being embedded in our brains.

This symbiosis lets us operate with volumes of data Einstein or Newton could never have imagined. But as the stories of the F-1 engine and the ADE-651 detector showed, this symbiosis is fragile. If we delegate understanding to "smart assistants," we lose the ability to verify their work. If an AI tutor teaches a child an incorrect fact, will anyone notice? If an AI architect designs a bridge with an error, will a human be able to find it?

The risk is not a robot uprising. It's competency collapse: the moment we become button-pushers incapable of repairing the system when the "magic" suddenly turns off.

What to do? Refuse the right to ignorance.

- Open hoods, take things apart. If you're a developer, look at the source code of a library. If you're a driver, understand how a differential works. Curiosity is the immune system against "magic."

- Demand proof. When someone sells you a miracle technology, keep asking "How does this work?" until you reach physics or mathematics. If the answer is "it's too complicated" — chances are someone is trying to deceive you.

- Preserve knowledge. Support open source. Write documentation. Share the experience of failure. Build tunnels between professional wells, so Formula 1 experience saves patients, and the knowledge of rocket engineers doesn't die in museum archives.

- Learn to draw the bicycle. Train your brain to understand mechanical and logical connections. Don't accept the world as given — accept it as an engineering problem.

We cannot stop the growth of complexity. We cannot abandon abstractions. But we must maintain control over them. Technology is not magic. It is crystallized human labor, intellect, and mistakes — let's not forget that.