Simplifying Is Hard: A Story of Failure

An astrophysicist builds a symbolic algebra system in Lisp to simplify 100,000-term equations generated by numerical simulation code — and documents every wrong turn along the way.

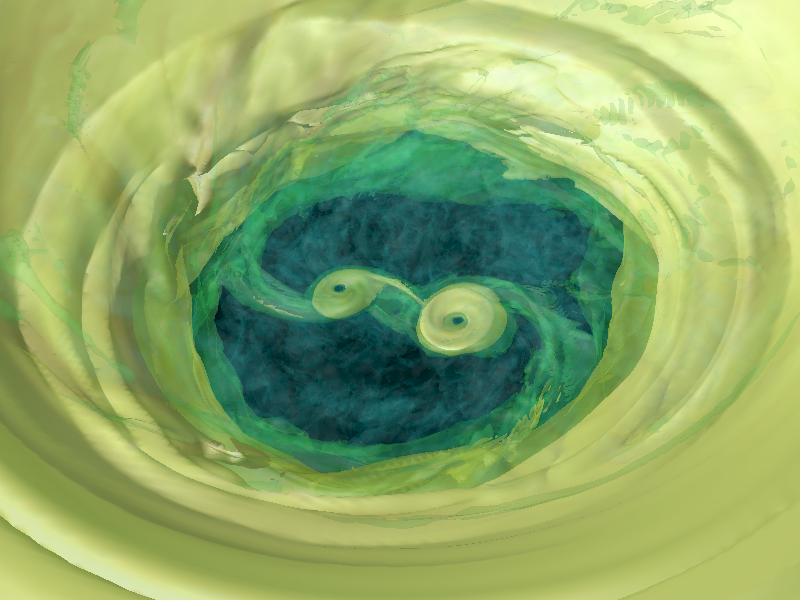

I am an astrophysicist. I build numerical models of gas dynamics around binary stars and exoplanets. A few years ago I decided to automate the generation of the simulation code itself — to take a high-level symbolic description of the physics and produce optimized, parallelized GPU code automatically. That required simplifying algebraic expressions. Some of those expressions have more than 100,000 terms.

This is the story of how I tried to do that, and all the ways it went wrong.

Why Lisp?

Mathematical expressions in Lisp look like this:

'(sqrt (+ A (* B (+ C D))))This is already a tree. Every expression is a list: the first element is the operator, the rest are arguments. Recursive algorithms over tree structures are idiomatic Lisp. There is no translation step between "expression" and "data structure" — they are the same thing. For symbolic mathematics, this is a significant advantage.

I started in Scheme, a Lisp dialect, then moved to Common Lisp (compiled with SBCL) for better optimization and broader platform support.

Attempt One: Pattern Matching in Scheme

My first approach used Maxima, the venerable computer algebra system. It was too slow and too limited for expressions with hundreds of thousands of terms. So I wrote my own simplifier: convert expressions to binary trees, apply a library of recursive rewrite rules, repeat until stable.

The behavior was unpredictable. Adding a new rewrite rule would sometimes cause memory to explode. Sometimes things slowed to a crawl. There was no guarantee the process would terminate. Despite generating code that actually ran correctly on the GPU, I abandoned the project.

Attempt Two: Common Lisp

The second attempt had a clearer structure. The core is a function I call extract-nums, which handles the arithmetic layer: compute all numeric sub-expressions, cancel terms multiplied by zero, remove multiplications by one, and consolidate numeric constants. Everything else builds on top of it.

The full simplification cycle works in three phases, repeated until the expression stops changing:

Phase 1: Expansion

- Flatten nested operations:

A+B+(C+D)+E→A+B+C+D+E - Distribute exponents:

(A*B*C)^x→A^x * B^x * C^x - Expand logarithms:

ln(A*B*C)→ln(A)+ln(B)+ln(C) - Run

extract-nums

Phase 2: Recombination

- Combine like powers:

X^a * X^b→X^(a+b) - Factor common subexpressions (the hard part — this consumed 80% of the debugging time)

- Detect negations: recognizing that

-(B+C)andB+Cmultiplied by-1are the same thing in different contexts - Run

extract-nums

Phase 3: Distribution

- Expand products:

(A+1)*(B+2)→AB + B + 2A + 2 - Run

extract-nums

Then repeat from Phase 1 until stable, followed by a prettification pass that converts -1*x back to subtraction and X^(1/2) to √X.

As a worked example, A*B/A proceeds as follows: rewrite as A*B*A^(-1), combine powers to get A^(1-1)*B, run extract-nums: A^0*B → 1*B → B.

Pattern-Based Differentiation

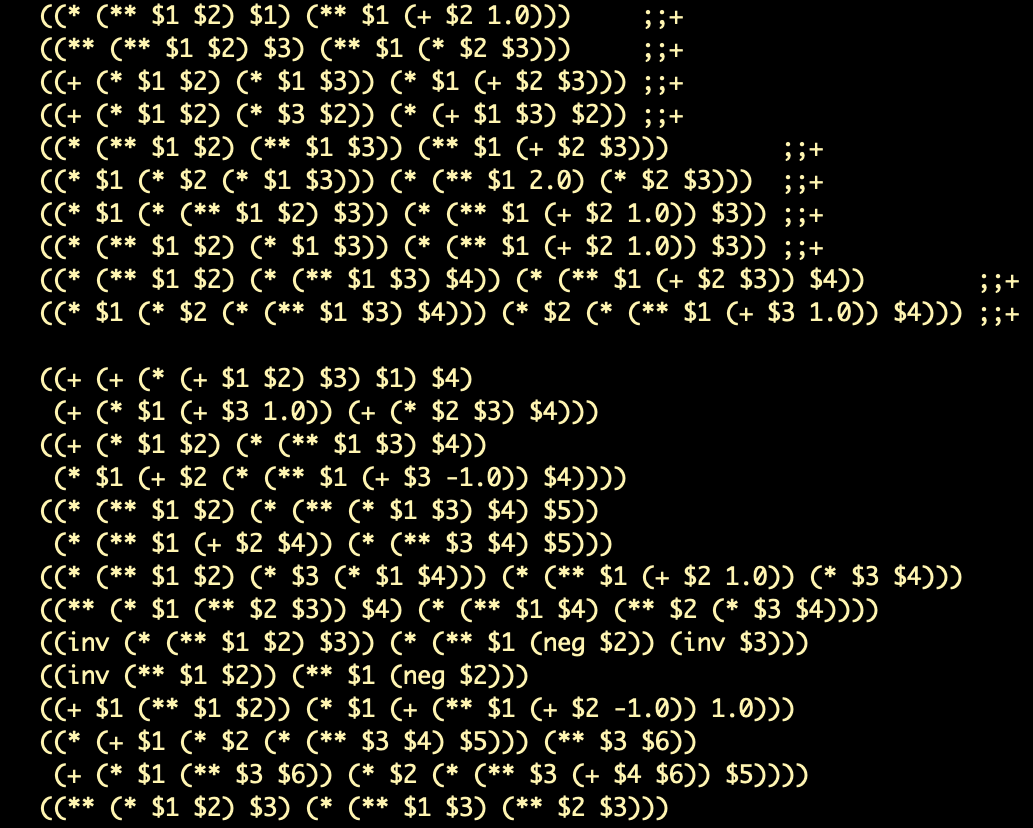

The system also includes symbolic differentiation via a template engine. The notation uses special sigils:

@— matches a constant$— matches any non-constant_— matches anything=>— separates pattern from replacement&rest var— collects remaining arguments

Some example rules:

(diff @ $) => 0) ;; derivative of a constant is zero

(diff _1 _1) => 1) ;; d(X)/dX = 1

(diff (exp $1) _2) =>

`(* (exp ,$1) (diff ,$1 ,_2)) ;; chain rule for expFor sums, using the rest-capture syntax:

(diff (+ &rest args) _1) =>

(cons '+ (mapcar (compose (curry #'cons 'diff) (rcurry #'list _1)) args))))The system applies rules recursively until no pattern fires. You can also inject custom templates on the fly:

(with-templates (((diff (foo $1) $2) =>

`(/ (- (foo ,(replace-subexpr $1 $2 `(+ ,$2 delta)))

(foo ,(replace-subexpr $1 $2 `(- ,$2 delta))))

(* 2 delta))))

(simplify '(diff (exp (foo x)) x) :no-cache t))And you can declare constants to allow further simplification:

;; Without constants declared:

(simplify '(diff (expt x y) x))

=> (* (+ (* (DIFF Y X) (LOG X)) (/ Y X)) (EXPT X Y))

;; With y declared constant:

(with-constants (y) (simplify '(diff (expt x y) x) :no-cache t))

=> (* Y (EXPT X (- Y 1)))Supporting Functions

Several helper functions round out the library:

equal-expr: Checks expression equivalence accounting for different orderings of commutative terms.extract-subexpr: Isolates an expression into the formA*E^n + B, useful for solving simple equations.get-polynome-cfs: Converts an expression into polynomial coefficient form.replace-subexpr: Recursively substitutes one subexpression for another.split-to-subexprs: Identifies frequently recurring subexpressions and extracts them into named variables. (There is currently a known bug here that has not yet been fixed.)

What I Learned

The project is available on GitHub under the MIT license as symath — use with caution. The README notes that GitHub analytics show a steady trickle of downloads from organic search. I suspect most of those people are looking for something else with a similar name. If that is you, please leave a comment.

The honest summary: simplifying algebraic expressions algorithmically is much harder than it looks. The problem is not writing rules; it is that rules interact. A rule that simplifies expression A can temporarily complicate expression B in a way that prevents a different rule from firing. Guaranteeing termination is non-trivial. Guaranteeing optimality is harder still.

Maxima has been working on this problem since 1967. I spent two attempts across two languages. Maxima is still winning.

FAQ

What is this article about in one sentence?

This article explains the core idea in practical terms and focuses on what you can apply in real work.

Who is this article for?

It is written for engineers, technical leaders, and curious readers who want a clear, implementation-focused explanation.

What should I read next?

Use the related articles below to continue with closely connected topics and concrete examples.