Simplifying Is Hard: A Story of Failure

An astrophysicist recounts two attempts to automate symbolic expression simplification in Lisp — first failing with pattern-matching in Scheme, then building a working library in Common Lisp through iterative decomposition and recomposition.

In my work, I frequently (well, practically always) deal with numerical modeling of gas dynamics (less often MHD), typically three-dimensional and, importantly, quite demanding computationally. Talking about numerical models could take a while, but in this article that would be excessive, so I'll try to summarize their essence in a few words.

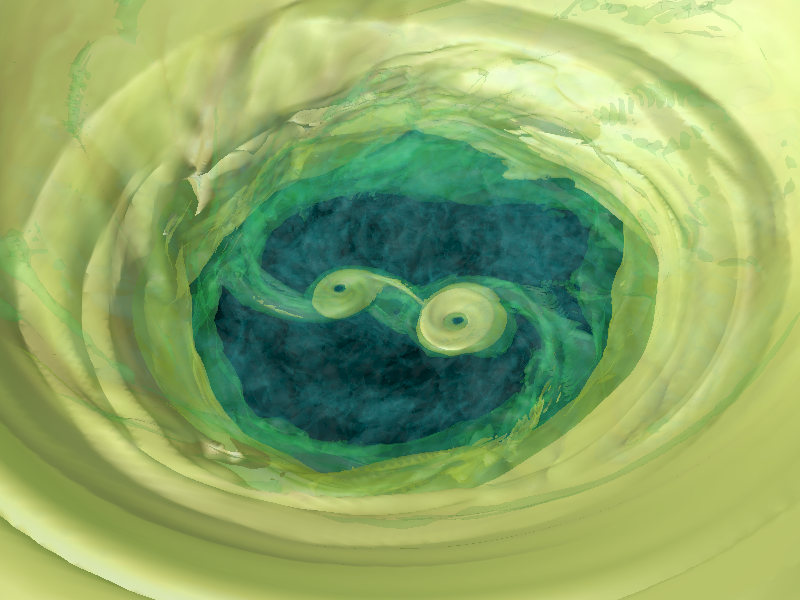

Imagine a region of space filled with gas. Somewhere inside it there's a pair of stars creating a gravitational field, and also (usually) one of them serves as a gas source, losing it from its surface, for example as wind.

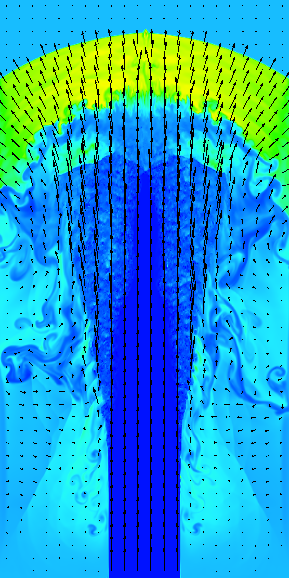

We divide space into cells, usually rectangular, small enough that gas parameters (density, velocity, temperature) within them can be considered constant. Then we divide time into intervals (steps), also small enough that little changes within the system during a single step. And then the computation begins — knowing the gas parameters in two neighboring cells, we can use complex systems of equations to compute the flux between them and determine how the gas parameters should change over one time step.

For everything to work well, there need to be many cells and short time steps. The more cells and shorter steps, the higher the accuracy and the finer the effects you can capture. Already 20 years ago, our models became so heavy that we moved from personal computers to supercomputers, and now even those aren't enough. So optimization questions for the codes we use are extremely pressing. A gain of even a few percent in speed can save days of computation using hundreds of processor cores. And at some point I thought: why not automate the writing of numerical codes?

Attempt One

Seems like a great idea. We take a system of equations, take a numerical scheme, combine one with the other (in practice this looks like multiplying matrices and vectors), set the grid parameters, parallelization method, initial and boundary conditions, press a button, and the computer gives us ready-made code — optimal and parallelized. All that's left is to run it. What could go wrong?!

A small digression. Although programming isn't my specialty, I program quite a lot. The fact that the result of my work is not a program but its output allows me to freely use tools that would get you hit on the head just for mentioning them in respectable organizations. So by that point I had already written something useful in about 15 different programming languages and had also "touched" about ten more. When I wanted to play with symbolic mathematics, without any embarrassment I chose the most suitable Lisp-like language — Scheme. The parenthetical syntax turned out to be incredibly convenient for representing formulas; they were easy to manipulate, and I generally liked the language.

So fairly quickly I entered the necessary formulas, Roe and Osher schemes, Einfeldt's entropy correction, wrote a set of simple functions for multiplying various vectors and matrices, came up with a fun way to avoid branching, launched it, and... for the three-dimensional ideal gas dynamics scheme, I got equations of epic proportions. Some had over 100,000 terms. This puzzled me. Careful inspection showed that the equations had, so to speak, simplification potential. Quite substantial chunks were being multiplied by zero, for example. And other parts could be reduced against each other. I need to simplify, I thought.

First I took the easy path. I installed Maxima — a computer algebra system — to which I automatically passed expressions for simplification. But the result didn't satisfy me. First, it worked very slowly; second, I could see with my own eyes how the resulting equations could be simplified further. And I bravely set about writing code that would simplify expressions the way I wanted.

So what do we have? We have expressions written in the prefix notation characteristic of Lisp. For example, the expression √(A+B(C+D)) would be written as:

'(sqrt (+ A (* B (+ C D))))For those unfamiliar with Lisp, let me explain a bit. Parentheses delimit a list of elements, which can themselves be lists. Elements are separated by spaces. A, B, C, D, and sqrt are so-called symbols — unique entities that have names. Moreover, + and * are also symbols. In principle, a list element can be anything, but for simplicity let's say our lists will only contain symbols and numbers.

This way of writing expressions has a great advantage. First, Lisp was created for working with lists, and if our expression is a list, it's convenient to manipulate. Second, these lists have a uniform format. For example, in C, expressions can be of the form cos(x) — function name and parameters in parentheses, separated by commas — or of the form a+b-c+d where operators separate operands. Just parsing such an expression (and it can have parentheses too! And some of them, but not all, delimit function parameters!) could be a thesis topic, let alone convenience. In Lisp, the first element of a list is always the function designation, and all others are its parameters.

So we have expressions as lists, time to think about how to simplify them. First, I decided to get rid of subtraction and division. I've disliked them since childhood. Indeed, why subtract and divide when you can add and multiply? On a serious note, these functions can have an arbitrary number (>1) of parameters that can be swapped, except for the first. Untidy. Getting rid of them is quite simple:

A−B−C−D ⇒ A+(−1)×(B+C+D)

A/B ⇒ A×B^(−1)

With a flick of the wrist, subtractions become additions and divisions become multiplications!

Then I went down a somewhat wrong path, as it later turned out. I decided to further unify expressions by leaving at most two operands. That is, performing transformations like:

A+B+C+D+E ⇒ A+(B+(C+(D+E)))

This seemed like a good idea. Indeed, if we previously had a list of lists — a tree — why not turn it into a binary tree, the simplest and most natural form? Then, according to my plan, I'd write templates like:

A+(B+(−1)×A) ⇒ B

which I'd apply recursively until no template could be applied. Recursion, just so you know, is no less characteristic a Lisp feature than lists. When we say Lisp, we mean recursion! So I created simple functions for pattern search and replacement and started writing these patterns. I'd look at expressions with my eyes, find a simplifiable chunk, and add the corresponding pattern. Run it, get a new expression, look at it again, and so on. I was persistent and believed in myself.

Overall, things went okay. Where simple patterns weren't enough, I inserted workarounds in code. Lisp was originally designed as a language for writing artificial intelligence, and at the time it was believed that AI would be strictly algorithmic, just a very complex algorithm. Lisp is well-suited for complex algorithms that include an unlimited number of workarounds. This suited me perfectly! But the more patterns I wrote, the more unpredictable the program's behavior became. Sometimes adding a fairly innocent pattern led to a many-fold increase in running time or a sharp spike in memory consumption, and the expressions didn't necessarily become simpler — often the opposite happened.

Overall, I managed to achieve more-or-less satisfactory results, generate optimized parallel code (even for GPU!) and compute several test problems quite successfully. But globally, of course, it was a failure. Expression simplification turned out to be a task far more complex than I had envisioned. I abandoned the project, but didn't forget it.

Attempt Two

Several years passed. All those years, a worm of dissatisfaction gnawed at me. After all, what tripped me up was a problem that seemed trivial — reducing terms in an expression. Third-graders handle this easily! So at some point my patience snapped and I took up Lisp again.

This time I decided to write the code not in Scheme but in Common Lisp (CL). The main reason: CL has good compilers. Yes, Scheme compilers exist too, but I couldn't find one that was a) cross-platform, b) well-optimizing, and c) free. For CL, which is a direct descendant of the original Lisp, there are excellent cross-platform compilers, the most common being SBCL.

Diving into the freewheeling, anarchic Common Lisp after the laconic, academic Scheme, I felt like a conservatory graduate attending their first rock concert. A special role was played by the book Common Lisp Recipes, which can only be compared to a book of black magic, after the white magic book SICP. At first, much about this language seemed superfluous, unnecessary, clumsy, and bulky. However, after a while I got into it and discovered that over its more than half-century history, this language had acquired — I don't even know how to say it — a kind of completeness?

Every rake that could be stepped on had already been stepped on by someone before you, stepped on so many times that even the language standard now includes special soft rubber pads for the handles. And these are precisely the things that seemed strange and excessive to me during my first encounter with CL, but proved very useful later. In short, I won't hide it — I fell in love with Lisp for the second time.

So I started from a clean slate, armed with the invaluable experience from my first attempt. First I perform all matrix and vector operations so as to obtain the resulting matrix or vector, whose elements can be worked with independently. Then, of course, I eliminate subtractions and divisions as shown above. And then all the under-the-hood magic begins.

I decided that the first stage should compute everything computable in the expression. That is, if we have the expression A+B+10+20+0×C, we must turn it into A+B+30, summing the numbers and discarding everything multiplied by 0, removing 1 where multiplication by 1 occurs, etc. Let's call this procedure extract-nums. As it turned out, this is the most important procedure in the entire simplification — essentially, simplification happens within it, and everything else is just transforming expressions so that extract-nums can discard what's unnecessary.

Next, if you recall, previously I converted everything to binary tree form, but this time I went in the diametrically opposite direction — I collect same-type operations by opening parentheses:

A+B+(C+D)+E ⇒ A+B+C+D+E

Then I expand powers:

(A×B×C)^x ⇒ A^x × B^x × C^x

X^(a+b+c+d) ⇒ X^a × X^b × X^c × X^d

Logarithms are also broken into parts:

ln(A×B×C) ⇒ ln(A)+ln(B)+ln(C)

ln(X^y) ⇒ y×ln(X)

And then, paradoxically, I reassemble expressions under parentheses:

X^a × X^b ⇒ X^(a+b)

...and run extract-nums again! Where's the logic? Here it is:

A×B/A ⇒ A×B×A^(−1) ⇒ A^(1−1)×B ⇒ A^0×B ⇒ 1×B ⇒ B

This is a simplified example not requiring expansion, but showing how numerator and denominator factors cancel out. Notice that A only dropped out in the final stages, in extract-nums!

And then I... expand parentheses again! But this time in multiplications:

(A+1)×(B+2) ⇒ AB+1B+2A+2

And again extract-nums to discard the excess. After that, the most complex and blood-draining function begins working — it factors out common terms:

A+B+C−A ⇒ A(1−1)+B+C ⇒ 0A+B+C ⇒ B+C

And then, again, extract-nums reduces terms in sums. Seems simple? Here's the thing:

A×(B+C)+B+C+D ⇒ (A+1)×(B+C)+D

Notice that B+C appears here both as an expression in parentheses and as part of the sum B+C+D. That is, simple expression comparison when searching for common terms won't suffice — we also need to look for subexpressions within sums. But that's still just the flowers; here are the berries:

A×(B+C)−B−C

Here B+C appears as −(B+C), so we need not just to search for subexpressions but also their negated versions. It gets even worse:

A×(B−C)−2B+2C

Factoring out is pain. About 80% of debugging time went into this piece. To give you a sense of the scale: the procedure is also recursive, factoring out more than one subexpression, but this isn't the kind of recursion used elsewhere — where we just traverse the tree. Here we first traverse the tree, then recursively factor out expressions at the root, which can itself be part of the previous recursion. But even this I managed to debug. Yet another reason to love Lisp and functional programming.

Factoring out common variables is like crossing the equator. Before it, we're essentially breaking the expression into parts; starting from it (well, slightly before, but anyway), we're reassembling. For example:

X^a × Y^a ⇒ (X×Y)^a

This is done not just for aesthetics but also counts as simplification since it reduces the number of operations. And then... we repeat everything from the beginning. Again break down expressions, again factor everything out, again extract-nums reduces everything... Until the expression stops changing. Then we do cosmetic work. Restore subtractions and divisions, turn X^(1/2) into √X, and so on. Done. Simplified.

I've published the symath library that does all this and some other things on GitHub, MIT license. Use with caution!

Wait, What About...?

What about trigonometry, for instance? What about the well-known identity sin²X + cos²X = 1? Can't we account for it? The tree-and-pattern approach was somewhat more flexible in this regard... Everyone stay calm! Patterns are already here! But I've slightly changed my approach to them. For now, they're used only for differentiation.

As my university professor used to say, even a monkey can be taught to differentiate. The rules are few and simple. And it's only natural to use patterns for this. This time my patterns look like this:

(diff @ $) => 0) ;; Derivative of a constant is always 0

(diff _1 _1) => 1) ;; Derivative of X with respect to X is always 1

(diff (exp $1) _2) =>

`(* (exp ,$1) (diff ,$1 ,_2)) ;; Derivative of exponentialOr even like this:

(diff (+ &rest args) _1) =>

(cons '+ (mapcar (compose (curry #'cons 'diff)

(rcurry #'list _1)) args))))Don't be alarmed, this is also Lisp. You can invent a convenient syntax, implement it using language facilities, and then write code that way. For patterns I came up with the following notation:

@— a constant (not necessarily a number)$— anything that's not a constant_— absolutely anything=>— separates the pattern and the replacement&rest var— put all remaining members of the expression into variable var as a list@1,$X,_Y— place what matches the pattern into variables

If there's no suitable pattern for differentiating some exotic function, it stays under diff:

(simplify '(diff (exp (foo x)) x))

=> (* (EXP (FOO X)) (DIFF (FOO X) X))But you can add your own pattern for that function via the with-templates macro:

(with-templates

(((diff (foo $1) $2) =>

`(/ (- (foo ,(replace-subexpr $1 $2 `(+ ,$2 delta)))

(foo ,(replace-subexpr $1 $2 `(- ,$2 delta))))

(* 2 delta))))

(simplify '(diff (exp (foo x)) x) :no-cache t))Here we're replacing the derivative of function foo with a central finite-difference derivative using the replace-subexpr function from the same symath library. By the way, notice that Lisp has a rational type — 1/2 is not 0.5!

Differentiation, incidentally, turned out to be not such a simple operation. I recently needed (don't ask) to expand a Taylor series in two variables for a function that had several terms of the form:

exp(−(x−a)² + (y−b−c(sin(sin(sin(d+ex)))))²)

Just computing the fifth derivative required several hours and a couple of gigabytes of memory.

What Else Is There?

The symath library has a few more useful functions. One of them, replace-subexpr, has already appeared above — it's needed for replacing one subexpression with another, simply traversing the tree recursively and replacing everything equivalent from equal-expr's perspective.

The function extract-subexpr is more interesting. It lets you "isolate" a certain expression — bring the original formula to the form A×E^n + B, where E is the target expression, and A, n, and B are expressions that don't explicitly contain E. As you can easily guess, this function can find trivial solutions to some simple equations. But it doesn't quite qualify as a full solver, so I didn't have the audacity to call it solve.

Calling this function recursively, you can represent an expression as a polynomial in E. That's what the function get-polynome-cfs does, returning polynomial coefficients as an association list. I needed this function when I had to find the intersection of a cone with a plane — I was calculating the star occultation coefficient by an exoplanet for cells in the computational domain.

Another useful feature is the function split-to-subexprs. This function, as you can easily guess, extracts the most frequently occurring chunks from an expression into separate variables. (Warning! I recently discovered it's buggy! Not yet fixed.)

One more useful thing — checking expression equivalence, more reliable than the basic equal-expr. To check whether expressions E₁ and E₂ are equivalent, simply simplify the left sides of the following and check their truth:

E₁ − E₂ = 0

E₁/E₂ = 1

If at least one case yields 0 or 1, the expressions are equivalent. Why check both? The library, alas, isn't all-powerful and isn't yet sophisticated enough to fully reduce everything in both cases. But I'm working on it!

Instead of a Conclusion

Honestly, I didn't think there would be enough people wanting to use this little library. At minimum, Lisp isn't that popular, it seems to me. However, GitHub statistics show that people constantly come to this repository from Google searches, and someone clones it several times daily (about ten unique cloners per week!). I have serious suspicions that people are searching for something else with a similar name. If anyone reading this article knows what they actually need — please write in the comments! I'd be very curious to find out :)