I Trusted ChatGPT in Construction for a Year, Then It Invented Building Codes

A construction professional describes how ChatGPT fabricated nonexistent building codes and standards, leading his team to build a RAG-based AI system with verified regulatory documents to solve the hallucination problem.

My name is Alexey Krivonosov, and I work in suburban construction. For the past year, I've been actively using ChatGPT for various work tasks. It helped me draft estimates, compose client communications, and even plan construction stages. But one day, everything changed.

The Problem: ChatGPT Invents Building Codes

The neural network was fabricating nonexistent sections of regulations and producing numbers that didn't appear in any document. For example, the model would give a specific insulation thickness value (150 mm) and reference a nonexistent section of a document. It looked completely convincing: proper formatting, official-sounding language, specific numbers. But when I went to verify it against the actual regulatory document, that section simply didn't exist.

This is a critical problem in construction. If you use the wrong insulation thickness, concrete grade, or reinforcement spacing, you're not just making an aesthetic mistake. You're creating a safety hazard. Buildings can crack, roofs can collapse, and people can get hurt.

Why Does ChatGPT Hallucinate?

ChatGPT works with probabilities: it predicts the next word (more precisely, the next token) from the text so far. The model doesn't check facts against any source, and its context window is limited. When asked about a specific building code, it doesn't look up the actual document; it generates text that looks like it could be from such a document, based on patterns in its training data.
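A toy sketch of the idea, not a real language model: a hand-written probability table stands in for the model, and greedy decoding picks the most likely continuation. Every name, section number, and probability here is invented for illustration.

```python
# Toy illustration only: a hand-written probability table stands in for a
# language model. All section numbers and probabilities are invented.
NEXT_TOKEN_PROBS = {
    "section": {"5.3": 0.46, "4.1": 0.31, "7.2": 0.23},
}

# Hypothetical set of sections that actually exist in the source document.
REAL_SECTIONS = {"4.1", "7.2"}


def predict_next(token: str) -> str:
    """Greedy decoding: return the most probable continuation."""
    candidates = NEXT_TOKEN_PROBS[token]
    return max(candidates, key=candidates.get)


predicted = predict_next("section")
# The "model" never consults REAL_SECTIONS; only this external check does.
exists = predicted in REAL_SECTIONS
print(f"model says: section {predicted}; exists in document: {exists}")
```

The most probable continuation wins whether or not it exists, which is why the check has to live outside the model.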

This is especially dangerous in regulated industries where specific numbers, formulas, and references carry legal weight. A hallucinated reference to a GOST (a state standard used in Russia and other post-Soviet countries) could pass through reviews unnoticed in a construction project and lead to real structural failures.

The Solution: Building a "Digital Standard"

Over six months, my team and I developed our own AI tool built on a Retrieval-Augmented Generation (RAG) architecture. Instead of relying on the model's "memory," we built a system that retrieves actual regulatory documents before generating answers.

Stages 1-2: Finding the Solution and Assembling the Team (Mid-2025)

We researched existing solutions and found nothing that adequately addressed construction regulations. Most AI tools were general-purpose and suffered from the same hallucination problems. So we decided to build our own.

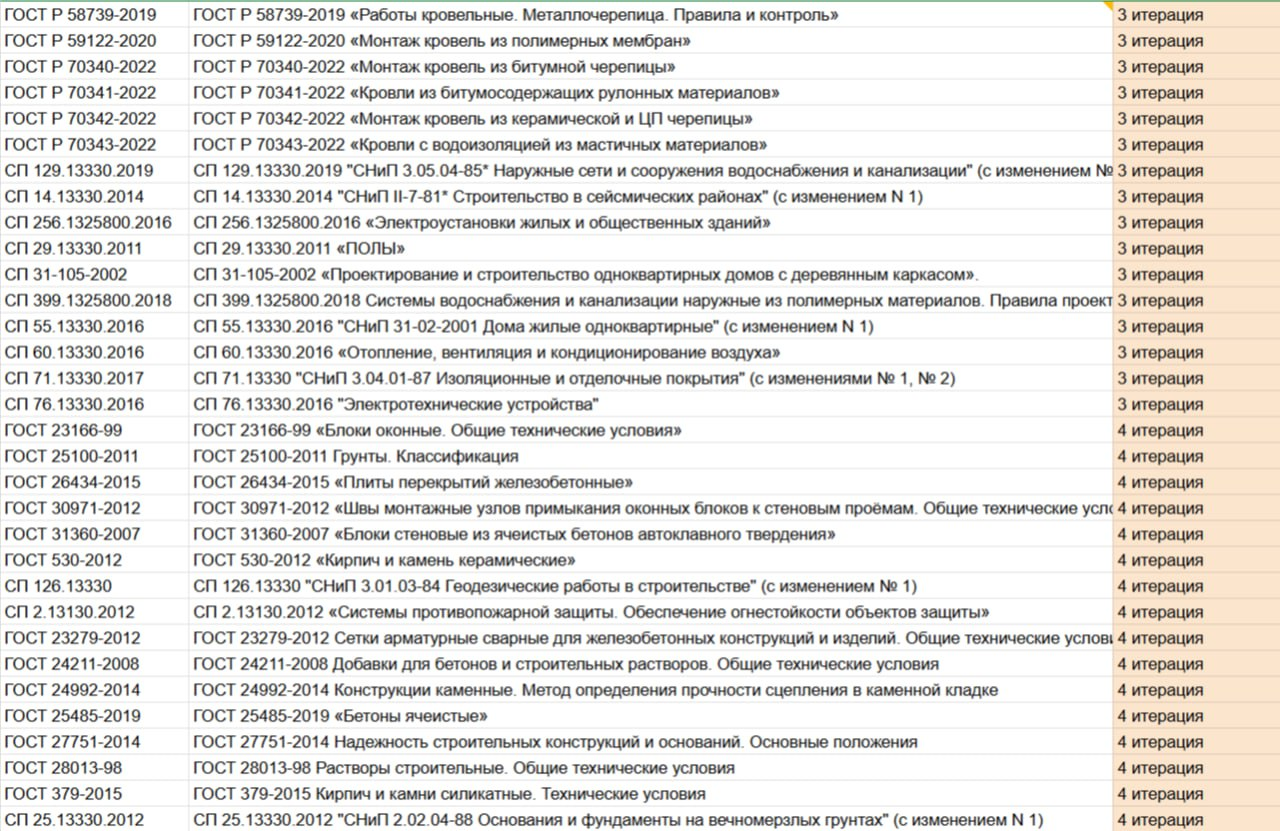

Stage 3: Six Months of Manual Document Processing

This was the most labor-intensive phase. Our team manually broke down regulatory documents into logical blocks (chunks):

- Over 5,500 semantic fragments were created

- Each fragment was tagged with metadata: document name, section number, topic

- All fragments were converted to vector format for semantic search
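A minimal sketch of what one such fragment might look like as a data structure. The field names, the `to_record` helper, and the dummy vector are assumptions for illustration; the article does not describe the actual schema. The document name is the placeholder used in the conclusion.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Chunk:
    """One semantic fragment of a regulatory document (hypothetical schema)."""
    document: str  # document name
    section: str   # section number within the document
    topic: str     # short topic tag
    text: str      # the fragment itself

    def citation(self) -> str:
        return f"{self.document}, section {self.section}"


def to_record(chunk_id: int, chunk: Chunk, vector: list[float]) -> dict:
    """Bundle a chunk with its embedding in the id/vector/payload shape
    that vector databases commonly store."""
    return {"id": chunk_id, "vector": vector, "payload": asdict(chunk)}


chunk = Chunk(
    document="GOST 12345-2020",  # placeholder name, not a real reference
    section="5.3",
    topic="placeholder topic",
    text="(fragment text goes here)",
)
record = to_record(1, chunk, vector=[0.0, 0.0, 0.0])  # dummy vector
print(chunk.citation())
```

Keeping the document name and section number next to the text is what later lets an answer point back to a source that can be checked.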

The formula problem: Mathematical formulas in PDFs are recognized incorrectly by standard OCR tools. Fractions, subscripts, and special symbols get mangled. Our solution was to convert all formulas to LaTeX format, which preserves mathematical notation precisely.
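As an illustration of why LaTeX helps, here is a generic textbook formula (not taken from the project's documents): the thermal resistance of a homogeneous layer is its thickness divided by its thermal conductivity, and resistances of layers in series add up (surface resistances ignored). Flattened to plain text, the fractions and subscripts are easy to lose; in LaTeX they are explicit.

```latex
% Thermal resistance of layer i: thickness over thermal conductivity.
R_i = \frac{\delta_i}{\lambda_i}, \qquad
% Layers in series: resistances add up (surface resistances ignored).
R_{\text{total}} = \sum_{i=1}^{n} \frac{\delta_i}{\lambda_i}
```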

Stage 4: Algorithm Configuration

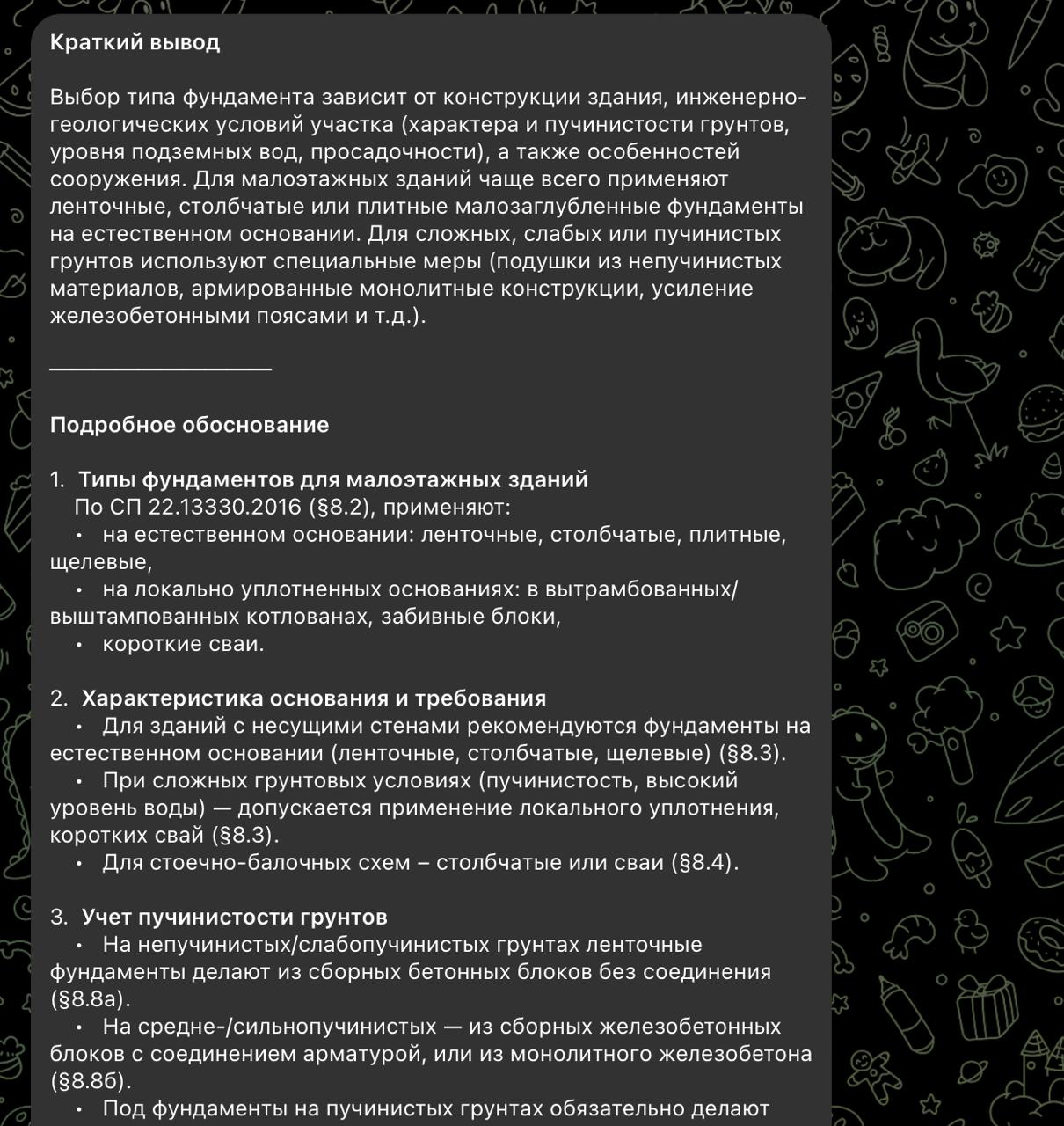

Here's how the system works:

- The user asks a question

- ChatGPT normalizes the query into a search-optimized form

- The system searches for relevant chunks in the vector database

- The model generates an answer based only on the retrieved data

- The answer is provided in three variants: brief, medium, and expert-level
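The steps above can be sketched roughly as follows. This is a simplified stand-in, not the project's code: a bag-of-words counter replaces the embedding model, an in-memory list replaces the vector database, and the corpus, function names, and prompt wording are all hypothetical.

```python
import math
import re
from collections import Counter

# Tiny stand-in corpus. Document names and texts are placeholders.
CHUNKS = [
    {"document": "DOC-A", "section": "5.3",
     "text": "minimum insulation thickness for exterior walls"},
    {"document": "DOC-B", "section": "2.1",
     "text": "concrete grade requirements for strip foundations"},
]


def normalize(query: str) -> str:
    """Stand-in for the LLM normalization step: lowercase, drop punctuation."""
    return " ".join(re.findall(r"[a-z0-9]+", query.lower()))


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call an embedding model."""
    return Counter(text.split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, top_k: int = 1) -> list[dict]:
    """Return the top_k chunks most similar to the normalized query."""
    q = embed(normalize(query))
    ranked = sorted(CHUNKS, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, chunks: list[dict]) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = "\n".join(
        f"[{c['document']}, section {c['section']}] {c['text']}" for c in chunks)
    return (
        "Answer using ONLY the sources below and cite them.\n"
        f"Sources:\n{sources}\n"
        f"Question: {query}\n"
        "Give three variants: brief, medium, expert."
    )


question = "What insulation thickness do exterior walls need?"
hits = retrieve(question)
prompt = build_prompt(question, hits)
print(prompt)
```

The generation call itself is left out; the point is that the model only ever sees text that came out of the retrieval step.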

The key difference from vanilla ChatGPT: our system cannot hallucinate regulatory references because it only cites documents that actually exist in our verified database. If the answer isn't in the database, the system says so honestly.
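One way such a guarantee could be enforced is sketched below; the article does not describe the actual mechanism, so the similarity threshold, the citation format, and every function name here are assumptions. Weak matches are dropped, and an answer is released only if every citation in it matches a retrieved source.

```python
import re

NOT_FOUND = "No matching provision was found in the verified database."


def select_sources(scored_chunks: list[tuple[float, dict]],
                   min_score: float = 0.35) -> list[dict]:
    """Keep only chunks whose similarity clears a (hypothetical) threshold."""
    return [chunk for score, chunk in scored_chunks if score >= min_score]


def cited_refs(answer: str) -> set[tuple[str, str]]:
    """Extract '[<document>, section <number>]' citations from model output."""
    return set(re.findall(r"\[([^\],]+), section ([\d.]+)\]", answer))


def guard(answer: str, sources: list[dict]) -> str:
    """Release the answer only if sources exist and every citation matches one."""
    if not sources:
        return NOT_FOUND
    allowed = {(s["document"], s["section"]) for s in sources}
    refs = cited_refs(answer)
    if not refs or not refs <= allowed:
        return NOT_FOUND
    return answer


sources = select_sources([
    (0.82, {"document": "DOC-A", "section": "5.3"}),  # placeholder documents
    (0.10, {"document": "DOC-B", "section": "2.1"}),
])
grounded = "See [DOC-A, section 5.3]."
invented = "See [DOC-A, section 9.9]."
print(guard(grounded, sources))  # passes through unchanged
print(guard(invented, sources))  # replaced by NOT_FOUND
print(guard(grounded, []))       # nothing retrieved, so NOT_FOUND
```

A check like this runs after generation, so it catches an invented section number even if the model ignores the instruction to stay within its sources.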

Technology Stack

For AI processing:

- OpenAI GPT-4.1 for generating answers

- OpenAI text-embedding-3-large for vectorization

For development:

- Qdrant — vector database

- Node.js and TypeScript — primary stack

- Python — individual modules for document processing

- LaTeX — mathematical formula preservation

Architectural decision: We built practically everything from scratch, without using off-the-shelf RAG frameworks like LangChain. This gave us full control over chunking strategy, retrieval logic, and answer generation — critical when dealing with regulatory documents where precision matters.

Launch and Results

On December 31, 2025, we launched the product for public access. The first paying customers appeared almost immediately. Construction professionals finally had a tool they could trust for regulatory questions.

Future Plans

- Different user roles (developer, inspector, foreman) with tailored answer depth

- API for integration with construction management software

- Automated updates to the document database as new regulations are published

- Expanding the vector database to cover more regulatory domains

Conclusion

This project demonstrates that for mission-critical tasks, standard LLMs require specialized architecture with verifiable data sources. ChatGPT is an incredible tool for many things, but when human safety depends on the accuracy of specific numbers and references, "probably correct" isn't good enough. You need a system that can point to its sources and say: "This answer comes from GOST 12345-2020, section 5.3, paragraph 2" — and you can verify it yourself.