I Saw the Future of Content. And It Is No Good

The author stumbles onto a YouTube channel generating 70+ polished AI-made documentary videos every three weeks — each racking up millions of views, zero comments, and appearing alongside legitimate content in recommendations. A personal essay on what algorithmic content flooding means for the future of the internet.

It started with a documentary about Amelia Earhart.

I was watching YouTube in the evening — the kind of passive browsing where you follow recommendations without thinking too hard. A video appeared about the disappearance of Amelia Earhart. It had five million views. The thumbnail looked professional. The topic is genuinely interesting, so I clicked.

The video was, how to put this, vaguely competent. Historical footage, interview clips, a narrator reading facts I'd heard before. It was the kind of thing that feels like a documentary but also slightly off, in a way you can't quite name. I watched about eight minutes and then, on a whim, checked the channel.

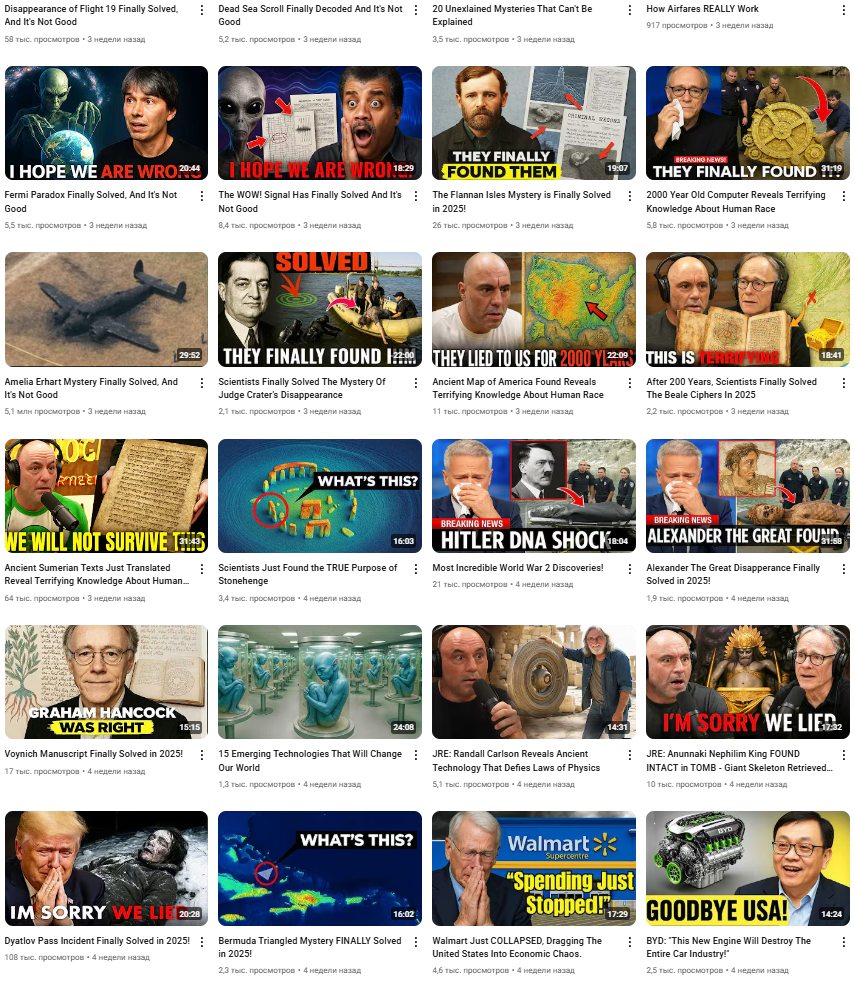

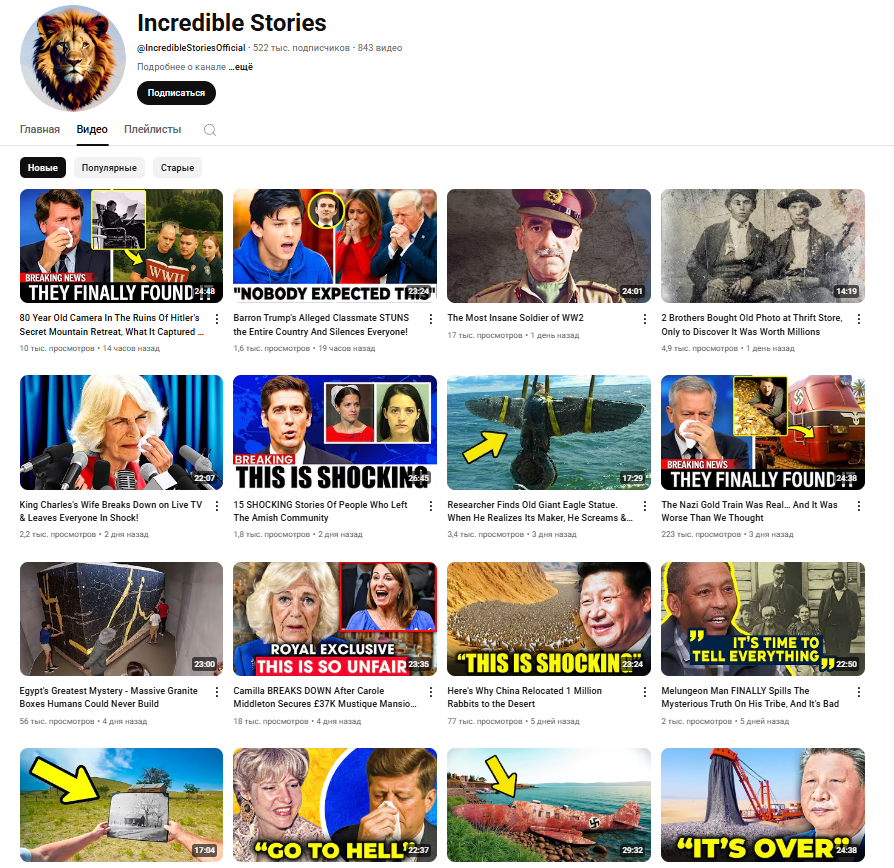

The channel had uploaded more than 70 videos in three weeks. All of them were over twenty minutes long. All of them had polished thumbnails. The titles ranged from "The Unsolved Mystery of Flight 370" to "Crying Trump at Dyatlov Pass": a mix of real historical events and surreal mashups that made no sense as genuine documentary topics. They were being generated at a steady rate, one every few hours.

Zero Comments

Here is the detail that I keep coming back to: the Earhart video had five million views and zero comments. Not a few comments, not comments disabled — zero. On a five-million-view video about one of the most discussed mysteries in aviation history. On YouTube, where comment sections on cat videos have thousands of entries.

That number is not organic. Five million views with zero comments means the views themselves were manufactured, almost certainly through some combination of botnet activity and algorithmic amplification. The channel's accumulated view and subscriber counts were themselves likely artificial. The content existed to appear popular, not to be watched.
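The mismatch can be made concrete with a small sketch. The Python below flags a popular video whose comment count is implausibly low for its view count. The function names and both thresholds (`min_views`, `min_ratio`) are hypothetical, picked only for illustration; they are not YouTube figures or a measured baseline.

```python
def comments_per_view(views: int, comments: int) -> float:
    """Return comments per view, or 0.0 when there are no views."""
    return comments / views if views > 0 else 0.0


def looks_inorganic(views: int, comments: int,
                    min_views: int = 100_000,
                    min_ratio: float = 1e-5) -> bool:
    """Flag a popular video whose comment ratio falls below a floor.

    min_views and min_ratio are illustrative assumptions, not platform data.
    """
    if views < min_views:
        return False  # too few views to say anything either way
    return comments_per_view(views, comments) < min_ratio


# The Earhart video described above: five million views, zero comments.
earhart_flagged = looks_inorganic(views=5_000_000, comments=0)
print(earhart_flagged)
```

A check this crude would also flag videos with comments disabled, so it is a prompt for a closer look rather than proof of manipulation.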

Why the Algorithm Doesn't Care

The YouTube recommendation algorithm optimises for engagement signals: watch time, click-through rate, session continuation. A video that five million accounts, real or bot, each watch thirty percent of the way through produces strong signals by those measures. The algorithm cannot distinguish between genuine curiosity and scripted interaction. It sees numbers and promotes accordingly.
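A toy model shows why. In the sketch below, a score is computed only from aggregate signals, so a human audience and a scripted one with the same numbers are indistinguishable. The `EngagementStats` type, the `engagement_score` function, and the weights are all invented for illustration; YouTube's actual ranking formula is not public and is certainly more complex.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EngagementStats:
    views: int
    avg_watch_fraction: float   # share of the video watched, 0.0 to 1.0
    click_through_rate: float   # share of impressions clicked, 0.0 to 1.0
    audience: str               # "human" or "bot"; never read by the scorer


def engagement_score(s: EngagementStats) -> float:
    """Toy score built only from aggregate signals (weights are made up)."""
    return s.views * (0.7 * s.avg_watch_fraction + 0.3 * s.click_through_rate)


organic = EngagementStats(5_000_000, 0.30, 0.05, audience="human")
scripted = EngagementStats(5_000_000, 0.30, 0.05, audience="bot")

# Same inputs, same score: nothing in the formula looks at who watched.
scores_match = engagement_score(organic) == engagement_score(scripted)
print(scores_match)
```

Any real system adds fraud detection on top of this, but the core problem stays the same: the scorer only sees what the signals report.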

This means AI-generated content, combined with artificial view inflation, can successfully colonise recommendation feeds. When I opened the channel, several of the videos were appearing alongside legitimate documentaries in YouTube's "Up next" sidebar. The algorithm had categorised them as belonging to the same content cluster and was routing real viewers toward them.

The Economic Logic

Consider the production economics. A legitimate documentary team (researchers, scriptwriters, archivists, a narrator, editors) might spend three to six weeks producing a single twenty-minute video. The AI pipeline produces the equivalent in hours, with no human labour beyond running inference and rendering. Even if YouTube's monetisation rates are low per view, at five million views per video with seventy videos in three weeks, the revenue is not trivial.
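A back-of-envelope calculation, using the figures above, gives a sense of scale. The revenue-per-thousand-views values in the loop are hypothetical placeholders, not actual YouTube rates, and the sketch assumes every video reaches five million views and that all of those views are monetised, neither of which I can confirm.

```python
VIDEOS = 70                   # uploads observed on the channel
VIEWS_PER_VIDEO = 5_000_000   # the Earhart video's count, assumed for all
PERIOD_DAYS = 21              # three weeks


def estimated_revenue(videos: int, views_per_video: int,
                      revenue_per_1000_views: float) -> float:
    """Gross revenue if every view paid out at the given rate."""
    return videos * views_per_video / 1000 * revenue_per_1000_views


total_views = VIDEOS * VIEWS_PER_VIDEO
hours_between_uploads = PERIOD_DAYS * 24 / VIDEOS

print(f"total views: {total_views:,}")
print(f"one upload every {hours_between_uploads:.1f} hours")

# Hypothetical payout rates per 1,000 views, low to moderate.
for rate in (0.10, 0.50, 1.00):
    revenue = estimated_revenue(VIDEOS, VIEWS_PER_VIDEO, rate)
    print(f"at {rate:.2f} per 1,000 views: {revenue:,.0f}")
```

Even the lowest placeholder rate yields a five-figure sum for three weeks of unattended output, which is the sense in which the revenue is not trivial.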

More importantly, YouTube rewards consistency. Channels that post regularly get algorithmic preference. A human creator producing genuinely researched content can manage one or two videos per week if they are fast. An AI pipeline can post every few hours without degradation. The platform's own incentive structure favours the machine.

This Is Already Happening on Legitimate Channels

The channel I found was an extreme case — blatant, high-volume, probably operating outside YouTube's terms of service. But a version of this is already standard practice on channels that are nominally legitimate. AI-generated voiceovers, AI-assembled stock footage, AI-written scripts with minimal human editing: these are increasingly the norm for "content farm" channels that have existed for years but can now operate far more cheaply.

The difference between the botnet channel and the legitimate-looking one is degree, not kind. In both cases, a person sitting down to make something real — to research a subject properly, to write something that reflects genuine thought — is competing against a pipeline that produces faster, more consistently, at essentially zero marginal cost per video.

Infrastructure Strain

There is also a purely technical dimension. Video at scale is expensive to store. One major platform has reportedly announced that it cannot store every Short its users upload and will automatically delete old content. If AI pipelines can generate hour-long videos by the terabyte, the storage and bandwidth economics of video hosting change fundamentally. Platforms will need to make choices about what to keep, and those choices will be driven by engagement metrics, the same metrics that AI-generated content is already gaming.

What I Don't Know

I want to be honest about the limits of what I observed. I don't know if the channel I found was a sophisticated monetisation operation, a research project, an experiment in adversarial machine learning, or something else entirely. It could be that YouTube has already detected and suppressed operations like this, and what I saw was an artifact of the detection lag. Platforms are aware of these dynamics and are actively working against them.

What I do know is that the technical barriers to what I witnessed are not high and are getting lower. The compute required to generate a twenty-minute narrated video with stock footage has fallen dramatically over the past two years. The tools are accessible. The economic incentive exists. The algorithmic environment rewards the behaviour.

The Larger Fear

I don't fully understand what I witnessed — perhaps an attempt at view manipulation that platforms will stop soon, or perhaps the birth of a new content niche. But here is what worries me most: the internet built its epistemics on a rough assumption that popularity correlates with quality, or at least with genuine human interest. If both popularity signals and content itself can be manufactured at scale, that assumption breaks.

What happens to trust when you cannot assume that a video with five million views was watched by five million people who chose to watch it? What happens to the concept of "what people care about" when caring can be simulated? The internet could become a landfill of generated information in which facts and original human content represent a couple of percent of the total volume.

I hope the Pareto principle holds, in the sense that some proportion of quality content remains findable and dominant. But I am not sure it will if the ratio shifts from 80/20 to something like 98/2 in favour of generated noise. I think I saw the beginning of that shift. And it is no good.