I Drew Food by Hand for 15 Years, but Then a Neural Network Came and Changed Everything

A mathematician-turned-food-illustrator shares how 15 years of hand-drawn commercial illustrations gave way to a hybrid workflow with Midjourney and Photoshop, where neural networks excel at catching fleeting moments but still can't draw a proper Turkish coffee pot.

This story was told to my blog by Anna Makarova, a mathematician and food illustrator.

The Faculty of Computational Mathematics and Cybernetics, and the "Crisis of Authenticity"

I studied at the physics and mathematics school affiliated with Novosibirsk State University, and then enrolled at the same university's mathematics department. But due to family circumstances, I moved to Moscow and transferred to Moscow State University's Faculty of Computational Mathematics and Cybernetics (CMC).

By the end of my studies, it became clear that a career in science wasn't quite what I wanted. Olympiad problems and mathematical puzzles were one thing, but serious numerical methods were something else entirely. And then a revolution happened in publishing: the mass transition to computer workstations. Publishing houses urgently needed specialists who could work with computers. By that time, my second side had emerged — the artistic one. I had been drawing since childhood, but it had always taken a back seat to the exact sciences.

That's how I ended up at the editorial office of the magazine "Screenplays." They had a brand-new Apple Macintosh, and nobody knew how to approach it. They needed someone who understood computers and was interested in graphic design. On my first day, I had no idea how to start working with the layout software, so I asked to spend the weekend learning the machine. It turned out to be a case of "fake it till you make it."

Later I worked at other publishing houses, print shops, and eventually at major advertising agencies. We did all kinds of advertising, from cosmetics to automobiles. In parallel, I earned a second degree, "Design of Mass Media and Communication" (also at MSU). I defended my thesis on "The Crisis of Authenticity in Contemporary Mass Culture" (this was long before generative neural networks arrived!).

In 2014, I went freelance. I needed to choose a specialization, since it's impossible to do everything equally well. I analyzed my experience and realized that my strongest skill was food zones for packaging: the part of the pack that shows the food itself. Colleagues noticed it; art directors praised my fruit compositions. I made that my focus.

The Specifics of Food Illustration

The main secret of a good edible picture is appetizingness. It's like the sparkle of facets in jewelry — nobody needs dull diamonds, and nobody needs unappetizing food.

But while a lack of sparkle in jewelry can be easily described in words, appetizingness is more complex. Different foods are made appetizing by different things. In practice, it is easier to name what makes food look unappetizing; remove all of those flaws and the image comes alive:

- Gray tones ruin the impression — food should be vibrant

- Crooked shapes are off-putting — food should be even

- Inaccuracy in details raises suspicion

- Wrong highlights make food look "plastic"

- Unnatural shadows kill volume

- An overly perfect image provokes distrust

Neural Networks — A New Way of Working with Images

I've been working with neural networks for three years now, and the main thing I've understood is that this isn't just a new tool. It's a fundamentally different way of creating illustrations.

Previously, I had several ways to create a food illustration:

- Photograph the product myself

- Get photo materials from the client

- Create an illustration from scratch using sketches or references

With the advent of Midjourney, the process changed. Now I start with generating base images. But it's not "press a button — get a result." Neural networks are capricious; the image isn't fully controllable. You need years of illustrator experience to bring a generated image up to commercial quality.

In practice, I now generate source images in Midjourney and then bring them to completion in Photoshop, relying on experience. It's similar to the old process where I used photographs as a base — only now I have much more control over the source material.

Currently I work in two modes:

- The main work happens in the neural network, with Photoshop as backup: I fix errors, refine details to the required quality

- The main work happens in Photoshop, with neural networks as backup: I generate image fragments in Midjourney, retouch with Adobe Firefly built into Photoshop

There is nothing revolutionary about the second mode; it is just an update to the tools. It only affects the speed of work, though sometimes very significantly. For example:

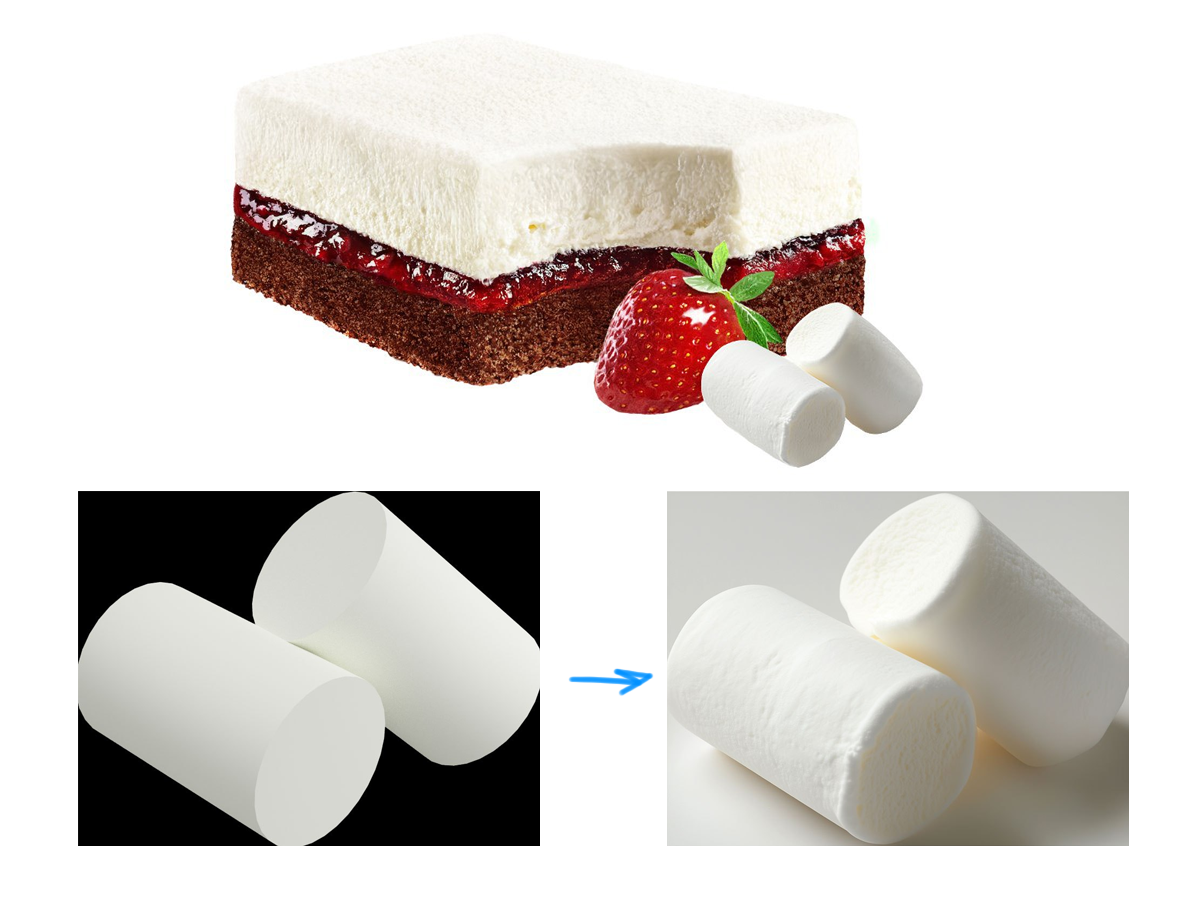

- Previously, drawing the texture of a marshmallow like this took two hours

- Now generation and refinement take 30 minutes

- All tasks can be solved with a mouse, without hand drawing

Some tasks started getting done many times faster. For example:

- Biscuit texture previously required hours of monotonous drawing

- Complex compositions had to be assembled from many photographs

- Drops and highlights were drawn by hand

- Light and shadow on objects were worked out layer by layer

Now all of this can be generated and then refined. But new challenges have appeared too...

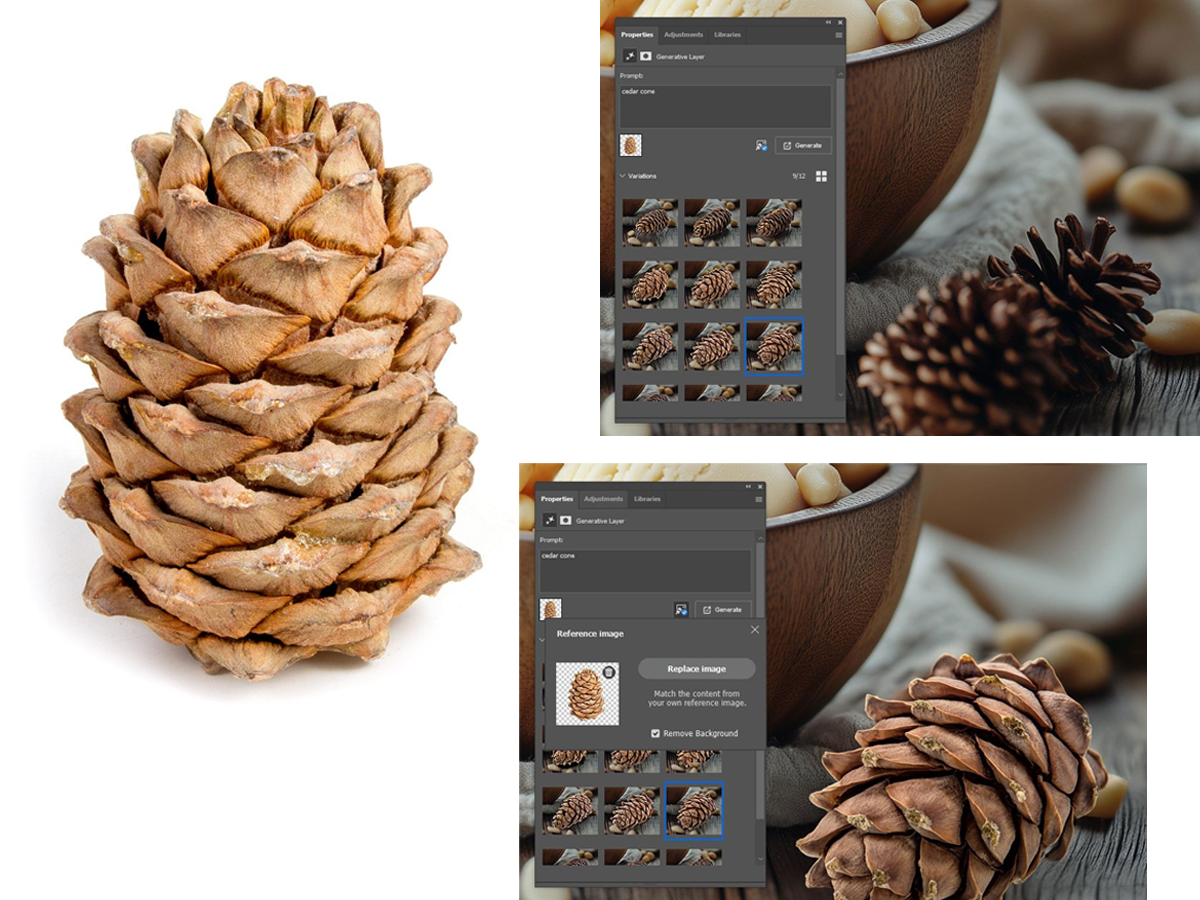

Where the Neural Network Lost to the Humans

If you need a specific item in a specific position, one that has been agreed with the client and written into the brief, there is no guarantee you can make that brief understandable to a neural network. I needed a cedar (pine nut) cone, but the neural network kept offering spruce and ordinary pine cones, which are more common. In such situations it's pointless to bang your head against the wall; you need to look for workarounds.

Here's what helped:

In Photoshop, we apply Generative Fill (powered by Adobe Firefly) with a reference image.

On top of the base image, we generate a cone with the prompt "cedar cone" and a reference image: a canonical cedar cone from a stock library.

The reference cone is positioned completely differently, but that's the magic: the neural network takes it and "rotates" it to fit. Add shadows, and the task is solved without a photoshoot.

What else can't Midjourney do?

- A Turkish cezve (coffee pot) — the neural network doesn't understand its construction

- Pelmeni (dumplings) — it can't get the right shape that manufacturers value so much

- A half-peeled banana will never look beautiful

- A wine glass filled to the brim — impossible to achieve, no chance

- A regular three-leaf clover — it will most likely slip in a "lucky" four-leaf one

- A simple Soviet tea glass holder, an authentic Tula gingerbread, and other local national cuisine items (buckwheat porridge, dried ring-shaped crackers, meat jelly, sour milk, herring under a fur coat) — it will most likely fantasize something of its own.

Physics, optics, the laws of the material world: none of this is fully within the grasp of neural networks yet. A LoRA (a lightweight fine-tune) trained for Flux can handle such tasks. Midjourney is less controllable in this sense, but the images it produces are livelier and brighter.

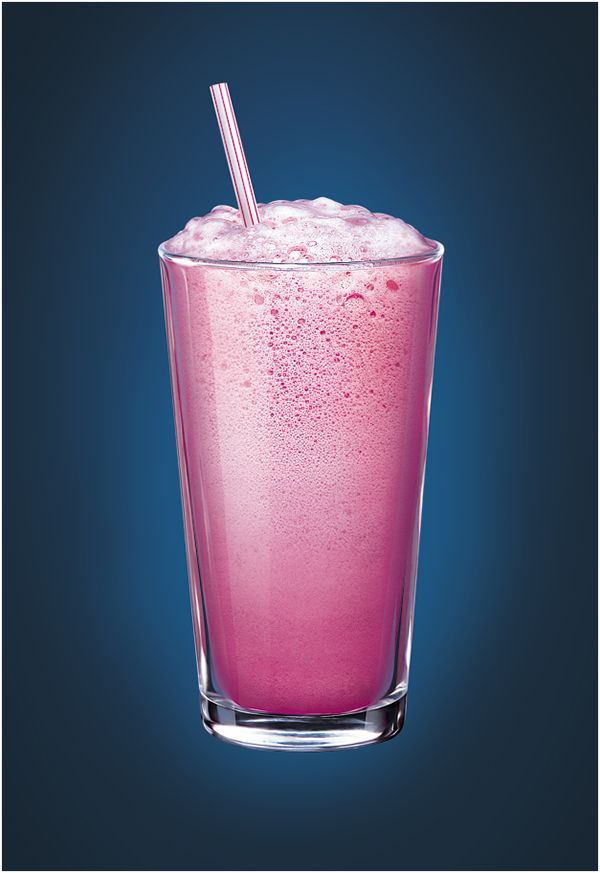

Where the Neural Network Beat the Humans

The neural network can capture the moment

A client wanted illustrations of their product against Vladivostok landscapes. I generated several beautiful images, refined them to final quality in Photoshop, and placed the packaging.

What's important here: notice how complex a photoshoot for these subjects would be. Ice cream is always hard to photograph, even in a studio. And in bright sunlight?

Nearly impossible.

And the second scene is a beautiful sunset, rapidly fading natural light — also a challenge.

In total, we created 12 scenes for different product flavors, in different consumption situations. Each scene presented its own challenges, and neural networks helped solve them all. Each time, the work started with test generations.

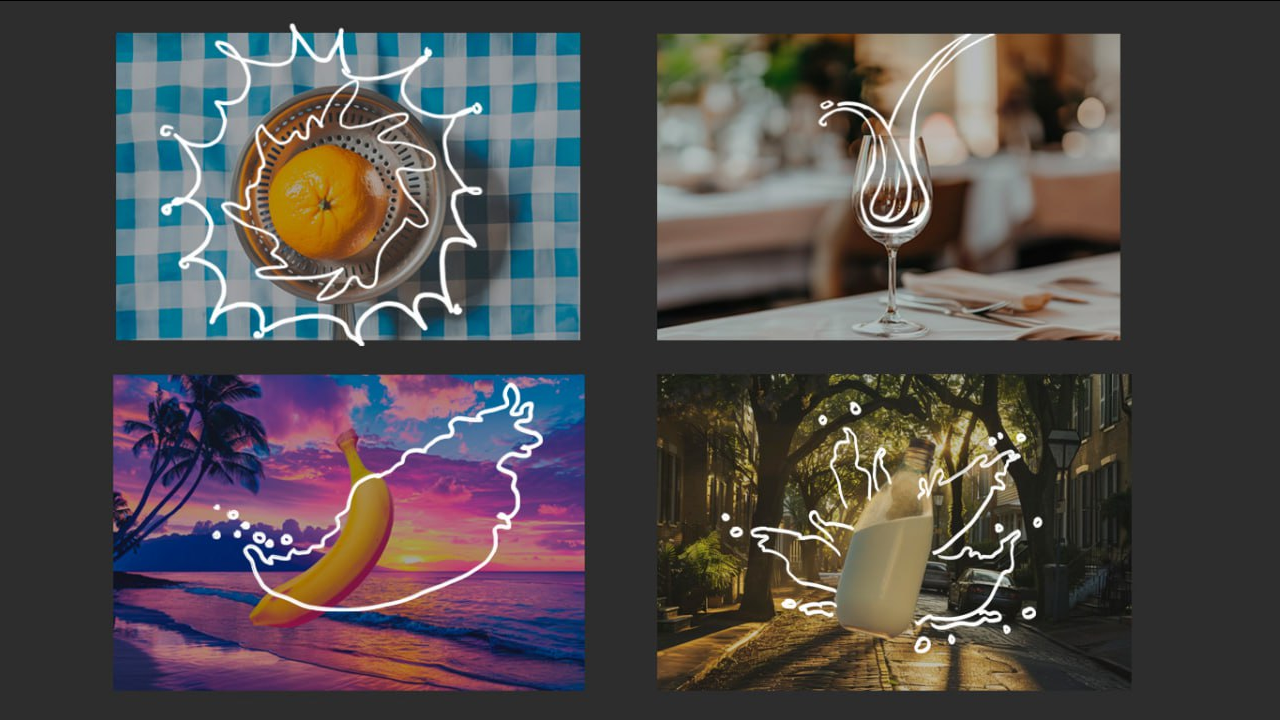

Another example of a complex subject — liquid splashes. Milk and yogurt are often needed on packaging. Water splashes are also very popular.

To get the right photo material for such a subject, a photographer needs a properly equipped studio, preferably with a "wet table." And even then, catching the right splash shape isn't easy. The budget inflates either from the photographer's fee or from paying hours to a retoucher who will then assemble an acceptable splash from individual frames. That was exactly the kind of work I did in the pre-neural-network era.

So when I managed to devise and test a new process for this task, it immediately went into production on several projects.

Here's the essence. Imagine the print layout is nearly ready: the object is in place, and a splash is planned around it. For now the splash exists only in the art director's imagination, but they can sketch its contour, capturing all their ideas about harmony and composition. At that point the task becomes non-trivial, because not just any splash will fit that contour.

Let me show you how I do it with Midjourney and Photoshop:

The first AI we need is Adobe Firefly, the neural network built into Photoshop as the Generative Fill tool:

- Outline the splash with a selection and enter a prompt. You can toggle the sketch layer on and off to see what works better on your particular image.

- It's advisable to vary the text, using different options. It will take several attempts before the splash turns out acceptable. Choose the best one.

Now let's move to Midjourney:

- The splash style can be changed. Take the image from the previous step and a second splash image with the desired shape; when choosing it, pay attention to the fluidity of the liquid and other details. Upload both images to Midjourney.

- Generate until you have enough material. Use multiple references simultaneously. Use image prompts as well as the --sref, --iw, --sw, --s, and --style raw parameters.
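As a rough sketch of how those pieces fit into one prompt string: the helper below is hypothetical (it is not part of Midjourney or any official client), the URLs and numeric values are placeholders, and the order of image prompts, text, then parameters follows the usual Midjourney prompt layout. It only builds text to paste into Midjourney.

```python
from typing import Optional, Sequence


def build_splash_prompt(
    text: str,
    image_urls: Sequence[str] = (),
    sref_urls: Sequence[str] = (),
    image_weight: Optional[float] = None,
    style_weight: Optional[int] = None,
    stylize: Optional[int] = None,
    raw: bool = False,
) -> str:
    """Assemble a prompt string: image prompts first, then text, then parameters."""
    parts = list(image_urls) + [text.strip()]
    if sref_urls:
        # Several style references can be listed after a single --sref.
        parts.append("--sref " + " ".join(sref_urls))
    if image_weight is not None:
        parts.append(f"--iw {image_weight:g}")
    if style_weight is not None:
        parts.append(f"--sw {style_weight}")
    if stylize is not None:
        parts.append(f"--s {stylize}")
    if raw:
        parts.append("--style raw")
    return " ".join(parts)


# Placeholder inputs: the Firefly result as an image prompt, a shape reference as --sref.
prompt = build_splash_prompt(
    "milk splash around a strawberry, studio light",
    image_urls=["https://example.com/firefly_splash.png"],
    sref_urls=["https://example.com/splash_shape.png"],
    image_weight=1.5,
    style_weight=200,
    stylize=50,
    raw=True,
)
print(prompt)
```

Keeping the weights as named arguments makes it easy to vary one of them at a time between test generations.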

The finished splash can be improved by adding a thin contrasting outline along the edge of the splash.

The Neural Network Won at Rapid Style Replication

Recently, Midjourney introduced the moodboards feature — you can assemble several images, let the neural network "cook" something unified from them, and start receiving images in that style.

To my board I then added successful generations obtained with --sref, and after that, generations from the board itself. The important thing is to keep everything balanced so that nothing dominates. Here's what you get in the end:

You might think the base model can already generate images in any style, so why this "add-on"? But no — if you want to differentiate yourself from the ubiquitous neural network mass-production, it's worth investing in fine-tuning. Midjourney is very receptive and allows you to achieve practically anything, especially with its latest powerful features like retexture and moodboards. Importantly, generations from moodboards even reduce the need for cherry-picking, which is always nice.

For comparison, look at generations from identical prompts, but in one case with the moodboard personalization code (left) and in the other — simply with the word "watercolor" in the prompt (right).

Recommendations for working with this feature:

- Stylize (--s) can be increased, slightly or all the way (300 to 1000). This sometimes helps amplify the style's influence, but not always;

- On non-square formats, the style sometimes drops out and falls back to photography — you can try increasing --s or, as a last resort, outpaint from squares, which have far fewer misfires;

- The longer the prompt, the less stability, so it's better to prompt judiciously;

- At the beginning of the prompt, you can add a "hint" — one or two words to help the board remember its purpose. For example, if it's watercolor, you can start with "watercolor";

- "Chaos" (--c) works with moodboards and is useful, but "weird" (--w) doesn't;

- Moodboards can now be used in combination — this sometimes produces especially good results.
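These recommendations can be folded into a small prompt checker. This is a sketch only: the function name, the 25-word threshold, and the `MOODBOARD_CODE` placeholder are all assumptions made for illustration, and it assumes the moodboard code is passed through the `--p` parameter. It deliberately offers no `--w` option, since "weird" does not work with moodboards.

```python
from typing import List, Optional, Tuple

MAX_WORDS = 25  # arbitrary cutoff for a "long" prompt; an assumption, tune to taste


def moodboard_prompt(
    text: str,
    profile_code: str,
    hint: str = "",
    stylize: int = 300,
    chaos: Optional[int] = None,
    aspect: str = "1:1",
) -> Tuple[str, List[str]]:
    """Return (prompt, notes) following the recommendations listed above."""
    notes: List[str] = []
    # The optional hint goes first, e.g. "watercolor".
    body = f"{hint} {text}".strip() if hint else text.strip()
    if len(body.split()) > MAX_WORDS:
        notes.append("long prompt: the style may be less stable")
    if aspect != "1:1":
        notes.append("non-square format: raise --s or outpaint from a square")
    parts = [body, f"--p {profile_code}", f"--s {stylize}", f"--ar {aspect}"]
    if chaos is not None:
        parts.append(f"--c {chaos}")
    return " ".join(parts), notes


prompt, notes = moodboard_prompt(
    "ripe figs on a linen napkin",
    "MOODBOARD_CODE",  # placeholder, not a real code
    hint="watercolor",
    stylize=600,
    chaos=10,
    aspect="3:2",
)
print(prompt)
print(notes)
```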

A Lyrical Digression on Prompting

Once upon a time, in the distant year of 2023, when young prompt engineers had just mastered the extraordinary art of assembling tokens into prompts, the future seemed cloudless and long. But even then, some warned that the profession would be short-lived and that the star of prompting would soon set.

The most caustic skeptics compared it to elevator operators — boys who once pressed buttons in elevators until passengers learned to press those buttons themselves.

And indeed, something similar can be observed. Additional tools have appeared and developed that make the prompt itself a less crucial part of the generation process. However, for now it's still an integral one.

So it's worth remembering prompting etiquette. There's an unkind nickname, "boomer prompts," for bloated prompts overloaded with junk. Avoid that. A prompt should be lean and tight, so that every word in it has weight and produces a clear effect on the generation.

The Neural Network Won at Speed for Repetitive Tasks

Beyond standalone neural networks, numerous platforms have emerged and are developing where you can chain them together. Such constructs perform a narrow but complex task that none of the participating neural networks could handle on its own.

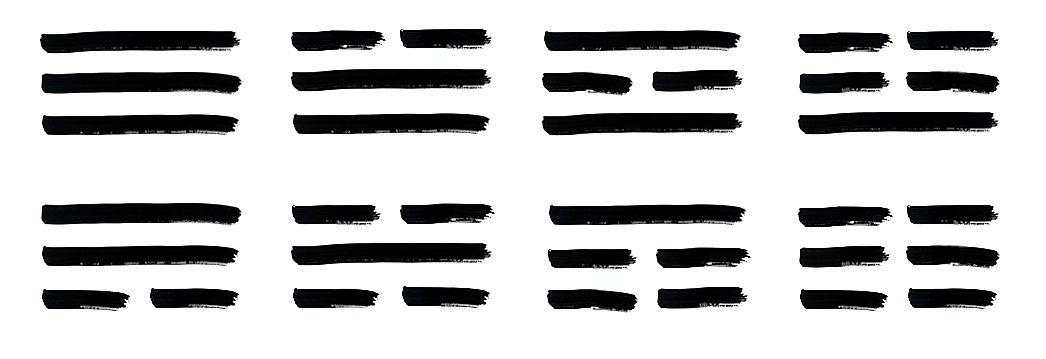

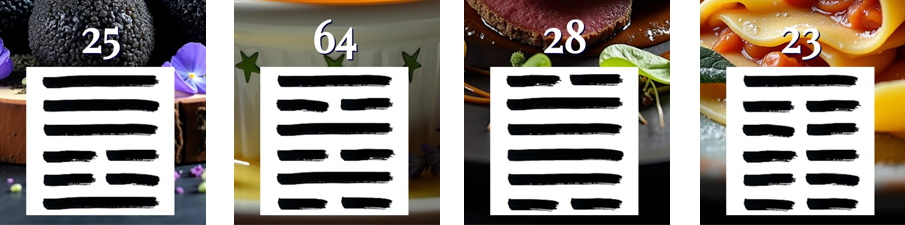

For example, there's the well-known site with "glyphs." I experimented on it while it was free and made a couple of fairly whimsical glyphs for educational purposes and for the love of art. I believe it's very useful to spend time on such things.

It suddenly occurred to me that it would be great to make neural networks match food to hexagrams from the Chinese Book of Changes. Here's how my glyph works:

1. First, a mysterious hexagram symbol consisting of lines appears randomly. There was a technical difficulty here: I wanted the symbols to look like real ink calligraphy, with brush stroke irregularities. Creating 64 different images seemed impractical, so I made eight base trigram elements, simply drew them as digital illustrations and uploaded JPEG files. I planned to assemble symbols from two halves, but neural networks confused top and bottom in one out of ten cases. Only when I uploaded the complete list of hexagrams to the glyph with exact structural specifications did the program work correctly.

2. Next, GPT-4 determines the hexagram number from the drawn symbol based on the list.

3. Claude 3.5 Sonnet forms an interpretation of the hexagram, drawing on ancient knowledge and Chinese texts.

For example, for hexagram #60 "Limitation," it suggested: "Just as water finds natural boundaries in a vessel, this moment requires conscious moderation and clear boundaries to maintain harmony and prevent the disruption of natural order through excess."

4. A second Claude step creates a prompt for food image generation, inspired by that interpretation. It invents a dish that should reflect the meaning of the hexagram.

5. For the final image generation, I use Flux dev — it balances between realism and symbolism better than others.

6. The finishing touch: an aphorism at the top of the image on the theme of the same hexagram, again written by Claude.

The result is a composition of the calligraphic hexagram symbol, its number and name, plus a dish illustration and a philosophical phrase for meditation.
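To see why confusing top and bottom matters, here is a minimal sketch of the trigram bookkeeping. It is not the glyph's actual implementation: the data layout, the function names, and the stand-in functions for the model calls are assumptions, and the lookup table is deliberately partial, with only two of the 64 entries filled in. Swapping the halves of hexagram 60 gives a different hexagram, 47.

```python
from itertools import product
from typing import Callable, Dict, Tuple

Trigram = Tuple[int, int, int]

# Lines are listed bottom to top: 1 = solid (yang), 0 = broken (yin).
TRIGRAMS: Dict[str, Trigram] = {
    "heaven": (1, 1, 1),
    "lake": (1, 1, 0),
    "fire": (1, 0, 1),
    "thunder": (1, 0, 0),
    "wind": (0, 1, 1),
    "water": (0, 1, 0),
    "mountain": (0, 0, 1),
    "earth": (0, 0, 0),
}

# Partial lookup keyed by (lower, upper); a full table would have 64 entries.
KNOWN_HEXAGRAMS: Dict[Tuple[str, str], Tuple[int, str]] = {
    ("lake", "water"): (60, "Limitation"),
    ("water", "lake"): (47, "Oppression"),
}


def hexagram_lines(lower: str, upper: str) -> Tuple[int, ...]:
    """Stack two trigrams into six lines, bottom to top."""
    return TRIGRAMS[lower] + TRIGRAMS[upper]


def run_chain(
    lower: str,
    upper: str,
    interpret: Callable[[int, str], str],
    make_food_prompt: Callable[[str], str],
) -> str:
    """Steps 2 to 4 of the list above, with the model calls injected as plain functions."""
    number, name = KNOWN_HEXAGRAMS[(lower, upper)]
    meaning = interpret(number, name)
    return make_food_prompt(meaning)


all_pairs = list(product(TRIGRAMS, repeat=2))
print(len(all_pairs))                      # 8 * 8 = 64 combinations
print(hexagram_lines("lake", "water"))     # (1, 1, 0, 0, 1, 0)
print(KNOWN_HEXAGRAMS[("lake", "water")])  # (60, 'Limitation')
print(KNOWN_HEXAGRAMS[("water", "lake")])  # (47, 'Oppression')

# Trivial stand-ins for the language-model steps.
food_prompt = run_chain(
    "lake",
    "water",
    interpret=lambda n, name: f"hexagram {n} ({name}): conscious moderation",
    make_food_prompt=lambda meaning: f"a dish expressing {meaning}",
)
print(food_prompt)
```

Keying the lookup by an ordered (lower, upper) pair is the point of the sketch: the order has to be stated explicitly somewhere, which matches the fix of uploading the full hexagram list with exact structure.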

The New Economics of Illustration

The change in process required rethinking the approach to pricing. I've developed two models:

When working in the mode where neural networks only assist and Photoshop does the main work:

- Standard retouching goes at the regular hourly rate.

- Illustrations are sold at a price tied to size; the client receives the result, not the process. This has become slightly more attractive for clients because the work gets done faster.

The other mode, where the main work is done by the neural network, required a new approach:

- First I take time for "pre-prompting" — testing whether the task is even feasible; this is free

- The creative phase of finding a "working prompt" is charged by time and is expensive

- Finished images are priced per piece and are cheap

- Final refinement usually comes as a bonus, or we switch to regular hourly billing

A project might end with simply handing over the prompt to the client so they can generate on their own. But in practice this almost never happens — I'm the one who finishes the work. The more effective the first phase was, the less time goes into selecting successful variants.

There's another important aspect: now I can't immediately guarantee the client that their task will definitely be completed. This fundamentally changes the psychology of work that I was accustomed to over many years. You have to communicate more, explain the technology, consult. But this is also part of the new reality.

Illustration as an International Business

I started working with foreign clients about ten years ago. We assembled a database of contacts for design studios and agencies around the world, starting with competition winners. My husband took on much of this work and was a huge help at the start; he still periodically takes on these frustrating tasks, and his help is invaluable. It's hard for me to come up with creative ideas for outreach and to attract new clients, but a freelancer can't survive without it. Even if your current projects are going well, clients may forget about you if you don't stay in touch, keep working through your contact database, and tell them about new services.

We focused on studios involved in packaging and food illustration. We sent out a mailing to approximately a thousand addresses — this brought first orders from nearly every continent.

Over the years, an interesting picture of national characteristics emerged. Amusingly, much of it matched the stereotypes:

America and Australia

- Americans work fast, with specific questions and positivity

- The timing is such that you can't do anything else in parallel

- They don't haggle, except for formality

- Australians are similar, only they love emojis and might forget things

Europe

- Spaniards are warm-hearted but tight on budgets

- Germans are difficult due to over-meticulousness

- The French aren't willing to communicate in English

- Baltic country residents are articulate, friendly, and never in a hurry

Asia

- The Japanese don't make contact at all

- The Chinese never tell you about the whole project

- They may not introduce themselves by full name — using the studio name instead of a surname

- Communication feels like a call center: you're a cog, and they're cogs

Currently I have two main directions: working with Western clients and with Russian clients. There's a clear divide between them, primarily in the nature of communication. It affects everything so much that I even specifically adapted my promotional websites and significantly changed the tone of voice. At one time I was targeting Western clients — simply because they were willing to pay more; now the prices have equalized.

Client Problems

Throughout my entire time working with foreign clients, I've had only a few cases of non-payment. The most unusual was farmers from New Zealand who grew plums. They liked my work, sent a prepayment, and ordered a similar illustration. I made it, but they never sent the second payment. Yet they continued to correspond and asked me to understand their situation — the plum harvest had failed, and God would surely help me. You can't exactly sue someone in New Zealand, so I had to let them go save their plums. They promised to pay the $200 when things improved.

There was also a case with an Arab entrepreneur. The person looked serious: he talked about his ice cream startup, had video calls standing in a suit against the backdrop of Dubai skyscrapers. The story was the same: paid the prepayment, received the work, and "forgot" to pay the remaining $400. Unlike the Christian farmers, he simply went silent.

I gathered information, all the project data, and consulted a lawyer about the possibility of suing. The lawyer advised against it — too complicated for such a claim amount.

There were a couple more unpleasant moments and various process bumps, but overall my experience doesn't bear out the common fears about how often clients cheat. Of course, you need to stay alert and never agree to work without a prepayment or a contract; above all, this preserves the healthy nerves an artist needs so much. But you shouldn't jump at every shadow either: as a rule, people don't order pictures in order to deceive.

Why Not Stock Photography?

Working on stock sites always seemed like an attractive alternative, and I even made a couple of attempts to enter them. Neural networks added efficiency to the process, so thoughts immediately arose — what if I try breaking in again? Of course, I wasn't the only one. And therein lies the problem. The situation on all stock platforms is the same now: everything has been flooded by an avalanche of AI-generated content. Rising through this mass is virtually impossible for a newcomer. Even veteran stock contributors, with a solid multi-year portfolio, feel the pressure of this storm.

Platforms are trying to buy content from stock contributors to train their own AI! They pull the rarest and most labor-intensive items out of contributors' portfolios for training, leaving for sale only what is uncompetitive and has poor sales prospects. For example, if a photographer brings photos of people in motion, the stock site will likely take that work for training while accepting only static shots for sale. This makes sense, since neural networks need to fill their gaps. But from the contributors' side it looks like robbery: they're paid pennies for this training content.

At the same time, platforms often have very strict copyright requirements. Nothing belonging to others is allowed, not even an "idea," whatever that means. As a result, they are afraid to accept things like my codes and prompts. Documentation or a "release" is needed for everything, up to and including a video confirming that someone actually sat and painted the picture with a brush.

Copyright

Fifteen years ago, I made an illustration of a cup with an oxygen cocktail. It turned out so successful that it gradually became almost the standard for this product. I'd encounter my cup in the capital, in the provinces, and even in neighboring countries. It winked at me from signs and banners. Image search results are stuffed with it too.

What does an author feel when they suddenly encounter their work somewhere they didn't place it? For me, it triggers three reactions:

- Joy: the quickest reaction, pure endorphin. It's ordinary vanity, but positive and naive. It's nice that people like your art.

- Awkwardness: when you realize the image was stolen. It's hard to believe people didn't know it was wrong to do that.

- Condescension: in the end, you realize it's a trifle in our sinful world. Let him who has never painted over a watermark cast the first stone!

Now that neural networks have appeared, the topic of copyright has become even more acute (it's important not to confuse it with commercial rights). But the deeper I dive into it, the more clearly I understand: copyright is a legal bubble that is bound to burst someday. Perhaps neural networks have arrived precisely for this purpose.

By its nature, copyright is not a right that should be legally defended, but a category of conscience. An artist, by publishing their work, already gives it away, like Prometheus giving fire. If you're afraid — don't give it away. If you've given it away — don't be afraid!

If instead of dreary clones of my oxygen cocktail cup I saw someone creatively transform it, I would only be happy. It doesn't matter whether it was done by a neural network or anything else — what matters is movement, development, life.

Now there are more and more opportunities to test these beliefs in practice.

I often find myself in the opposite role: the one who must decide where the line runs between theft and ethical borrowing. I've noticed that the voice of conscience truly determines a lot. For example, it became obvious that even the thought of "copying" a specific artist's style with a neural network, let alone an attempt to do so, immediately causes discomfort. Conscience protests! But if you take a more cultured path in every sense (gather references from different sources and different authors, train the neural network on this mass, and add some influence of your own soul along the way), then it's a completely different matter. Such a process captivates and inspires.