I Finally Understood How Transparency Can Backfire — And an Incident Report

A hosting provider shares a detailed post-mortem of a data center power incident that took four servers offline, and reflects on why public transparency about infrastructure failures creates a paradoxical disadvantage compared to competitors who stay silent.

We at RUVDS have always been transparent about our infrastructure problems. Every outage, every incident, every hiccup — we write about it publicly. On Habr, on Telegram, in support responses. This article is about a specific incident in our Korolev data center, but it's also about a larger question: does this transparency actually hurt us?

The Incident

Thursday (previous week): Both independent power substations feeding our Korolev facility failed simultaneously. This is supposed to be impossible — the whole point of dual substations is redundancy. The outage lasted approximately 4 hours. Our UPS systems and diesel generators kept everything running, but the battery reserves took a significant hit.

Monday: Power loss recurred. This time, two UPS units failed to hold their loads, and four servers went offline.

Four servers out of the hundreds in that facility. But four servers is four clients whose services went down, and that's four too many.

Root Cause: A Supply Chain Problem

The failure chain started with something mundane: battery replacement scheduling.

We had planned routine UPS battery replacement for early December. On December 2nd, we paid for the new batteries, expecting delivery between December 3rd and 5th. The supplier received the shipment — but the boxes were physically damaged in transit. They rightfully refused to accept them and initiated a return.

This left us operating for a week beyond the planned battery maintenance window. The existing batteries were within their rated lifetime but at the outer edge of reliable performance. Under normal conditions — a single power event with a clean switchover to generators — they would have been fine.

But conditions weren't normal.

The Cascading Failure

Here's what happens during repeated power events:

- First blackout: Grid power fails. UPS batteries engage immediately, holding the load while diesel generators start up (30-60 second delay). Batteries partially discharge.

- Generator running: Generators take over. Batteries begin recharging — but this takes hours for a full charge.

- Grid restored: Power switches back from generators to grid. Brief transfer interruption, handled by batteries. More discharge.

- Second blackout (Monday): Grid fails again. Batteries engage — but they haven't fully recharged from Thursday's event. Some units can't sustain the load long enough for generator startup.

Each power fluctuation leaves the batteries more depleted. The diagnostic systems showed normal readings — temperature was maintained at 18-19°C, voltage readings appeared correct, built-in UPS indicators showed no warnings. But under actual discharge stress, the aged batteries simply couldn't deliver their rated capacity.

Infrastructure Design: Why Only Four Servers Failed

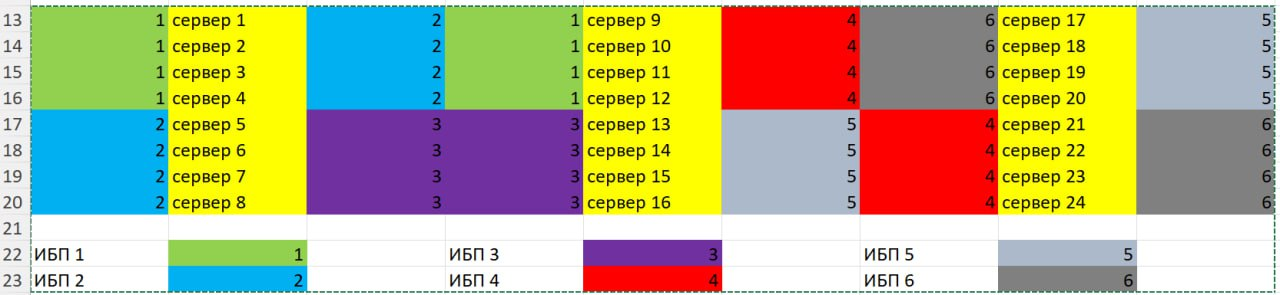

Our data centers use a redundancy pattern worth explaining. Every server has dual power supplies, each connected to a different UPS unit. The UPS units themselves are fed by separate power paths. We arrange servers in a "checkerboard" connection pattern — alternating which UPS pairs feed adjacent server slots.

This means that when two specific UPS units fail, they don't take out a contiguous block of servers. Instead, only servers that happened to have both their power supplies connected to the two failed UPS units go down. With the checkerboard pattern, that was four servers instead of the eight it would have been with sequential assignment.

Redundancy works — just not perfectly.

The Response

Our team immediately sourced replacement batteries from retail suppliers — not our usual bulk vendor, but whatever was available fastest. All planned battery replacements were completed the same day. Affected customers received SLA compensation.

We also reviewed our battery monitoring procedures. The key lesson: diagnostic readings (temperature, voltage, indicator LEDs) are necessary but not sufficient indicators of battery health. Under normal float conditions, a degraded battery looks identical to a healthy one. The failure only manifests under load — which is exactly when you need it most.

The Transparency Paradox

And now the part that prompted the title of this article.

Every time we publish an incident report like this, we see the same pattern in comments: "I've been with Provider X for three years and never had a single outage. You guys seem to have problems constantly."

Here's what I've come to understand: if we publish every incident and our competitors publish none, we will always look worse — even if our actual uptime is better. That provider you've been with for three years? They've almost certainly had outages. They just didn't tell you about them. Maybe they called it "scheduled maintenance." Maybe they quietly compensated affected users and moved on. Maybe the outage affected a small enough group that it never became public.

We talk about everything — on Habr, on Telegram channels, in support responses. We write detailed post-mortems. We explain root causes. And each time, it creates a data point that can be used against us in comparison with providers who maintain strategic silence.

This transparency has a real cost. Potential customers Google our company name, find incident reports, and choose a competitor whose Google results are clean — not because they're more reliable, but because they're more quiet.

So Why Keep Doing It?

Because the alternative is worse. Silence creates an information asymmetry that benefits providers at the expense of customers. If everyone hid their failures, customers would have no way to evaluate infrastructure reliability at all. We'd rather be the company that shows its work — imperfections included — than the one that maintains a facade.

And honestly, these post-mortems make us better. Writing up a failure in public detail forces a level of analysis and accountability that internal reviews alone don't achieve. We have to explain not just what happened, but why, and what we're doing to prevent it. That's uncomfortable. It's also effective.

Four servers went down. We fixed it same-day, compensated affected clients, and now we're telling you exactly what happened and why. That's the deal with transparency: it costs you reputation points in the short term, but it builds something more durable in the long term.

We're not going to stop.