Enhance It! Modern Resolution Upscaling

The gap between Hollywood's portrayal of image enhancement and real-world Super Resolution capabilities. Why police keep asking to 'enhance' surveillance footage, what science can actually do, and how marketing distorts the picture.

Enhance It! What, You Can't Be Bothered to Press the Right Button?

I have long since stopped flinching in surprise when the phone rings and a stern, confident voice says: "This is Captain So-and-so (Major So-and-so), can you answer a couple of questions?" Why not have a chat with our friendly police...

The questions are always the same. "We have video of a suspect, please help us restore the face"... "Help us enlarge a license plate from a dashcam"... "You can't see the person's hands here, please help enlarge"... And so on in the same vein.

To give you an idea of what we're talking about — here's a real example of heavily compressed video that was sent to us, where they ask to restore a blurry face (whose size is equivalent to roughly 8 pixels):

And it's not just Russian police officers bothering us — Western Pinkertons write too. Here, for example, is a letter from British police:

I have used your filters privately for some time to rescue my poor videos of family holidays but I would like to use the commercial filters for my work. I am currently a Police Officer in a small police force and we are getting a lot of CCTV video, which sometime is very poor quality and I can see how your filters would make a real difference. Can you tell me the cost and if I could use them.

Thank you

Or here's a police officer from Australia writing:

Note that the letter is very thoughtful — the person is concerned about whether the algorithm has been published and about accountability for incorrect restoration.

Sometimes they only admit during correspondence that they're from the police. Here, for example, the Italian Carabinieri would like to get help:

Dr. Vatolin

Thanks for the answer.

The answer is worth also for the police forces (Carabinieri investigation scientific for PARMA ITALY)?

To which software they have been associates your algorithms to you.

We would be a lot.

And of course, there are many inquiries from ordinary people...

It's clear that this entire stream of requests doesn't appear out of thin air.

Movies and TV series are primarily "to blame."

For example, here they enlarge a compressed video frame by 50x in 3 seconds and from a reflection in glasses they see evidence...

And there are many such moments in modern films and series. For example, this compilation video gathers such episodes from a whole pile of TV series; it's well worth two minutes of your time...

And when you see something like this in every movie, it becomes obvious to absolutely anyone that all you need is a competent computer genius and a combination of modern algorithms, plus someone to command "STOP!" and "Enhance it!" at the right moment. And voilà! A miracle happens!

However, screenwriters don't stop at this already worn-out trope, and their unbridled imagination goes further. Here's a truly monstrous example: brave detectives obtain a photo of the criminal from a reflection in the PUPIL of the victim. After all, reflections in glasses have already been done. That's banal. Let's go further! The surveillance camera in the hallway just happens to have the resolution of the Hubble telescope:

In "Next" (00:38:07):

In "Avatar" (1:41:04–1:41:05) the sharpening algorithm is, by the way, somewhat unusual compared to other movies: it first sharpens in specific places, and then a fraction of a second later catches up with the rest of the image — first the left half of the mouth, then the right:

In short, in extremely popular movies watched by hundreds of millions, image sharpening is done with a single click. Everyone (in movies) does it! So why can't you, such clever specialists, do it???

— I know this is easy! And I was told precisely that you work on this! Are you too lazy to press that button?

// Oh god… Damned screenwriters with their wild imaginations…

— I understand you're busy, but this is about your assistance to the state in solving a serious crime!

// We understand.

— Maybe it's about money? How much do we need to pay you?

// Well, how do you briefly explain that it's not that we don't need money… And then again, and again…

Any resemblance of the quotes above to actual conversations is purely coincidental, but this text was written partly so that we can ask a caller to read it carefully first, and only then call back.

Conclusion: Because the scene of enhancing surveillance camera footage with one click has become a cliché of modern cinema, an enormous number of people sincerely believe that enlarging a fragment from a cheap camera or dashcam is very easy. The main thing is to ask properly (or command, depending on your luck).

Where It All Comes From

Still, this stream of inquiries is not entirely the screenwriters' fault. We have indeed been working on video enhancement for about 20 years, including various types of video restoration (there are several, by the way), and below in this section you'll see our examples.

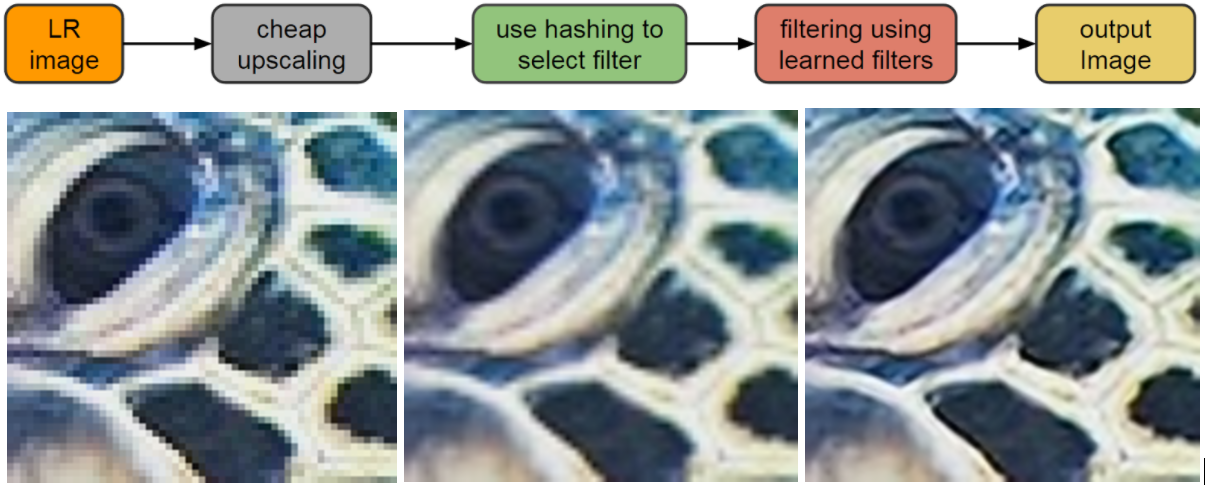

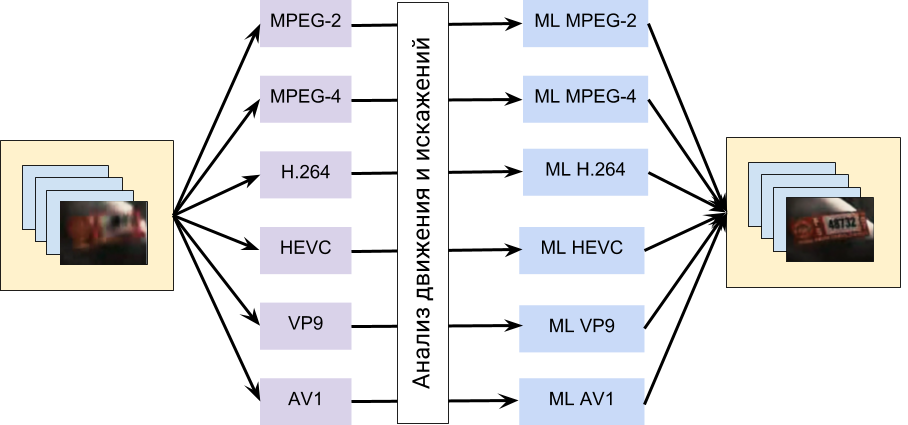

"Smart" resolution upscaling in scientific papers is usually called Super Resolution (abbreviated SR). Google Scholar finds 2.9 million papers for the query Super Resolution, meaning the topic has been quite thoroughly explored, and an enormous number of people have worked on it. If you follow the link, there's an ocean of results, each one prettier than the last. However, digging deeper, the picture, as usual, becomes less idyllic. In the SR field, two directions are distinguished:

- Video Super Resolution (0.4 million papers) — actual restoration using previous (and sometimes subsequent) frames,

- Image Super Resolution (2.2 million papers) — "smart" resolution upscaling using only a single frame. Since a single image contains no information about what was actually at a given spot, these algorithms in one way or another fill in (or, loosely speaking, "imagine") what could have been there. The main criterion is that the result should look as natural as possible, or be as close to the original as possible. Clearly, such methods cannot restore what was "actually" there, although they are perfectly usable for enlarging an image so that it looks better, for example for printing (when you have a unique photo but no higher-resolution version).
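Why single-image methods have to "imagine" can be shown with a toy example. The sketch below assumes the simplest possible camera model: a 1D signal downsampled 2x by averaging neighbouring pairs of samples (a box filter). Real optics and sensors are more complicated, and the function name `downsample2x` is purely illustrative. Two different high-resolution signals yield exactly the same low-resolution one, so nothing that works from that single frame can tell which one was actually there.

```python
import numpy as np

def downsample2x(signal: np.ndarray) -> np.ndarray:
    """Hypothetical camera model: average each pair of neighbouring samples."""
    return signal.reshape(-1, 2).mean(axis=1)

# Two different "scenes": fine alternating detail vs. a flat grey area.
detailed = np.array([0.0, 2.0, 0.0, 2.0, 0.0, 2.0])
flat = np.array([1.0, 1.0, 1.0, 1.0, 1.0, 1.0])

low_a = downsample2x(detailed)
low_b = downsample2x(flat)

print(low_a)                         # [1. 1. 1.]
print(low_b)                         # [1. 1. 1.]
print(np.array_equal(low_a, low_b))  # True: the fine detail left no trace
```

A single-image SR method has to pick one of the many possible originals. A good method picks a plausible one, which is not necessarily the true one.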

As you can see, it's 0.4 million versus 2.2 million, so roughly five times fewer people are working on actual restoration. The "make it bigger, just prettier" topic is in high demand, including in industry (the notorious digital zoom of smartphones and point-and-shoot cameras). Moreover, if you dig even deeper, it quickly turns out that a noticeable share of Video Super Resolution papers are also about upscaling video without restoration, because restoration is hard. In the end, those who "make it pretty" outnumber those who genuinely try to restore by roughly 10 to 1. A fairly common situation in life, by the way.

Going even deeper: the algorithm's results are often very good, but it needs, say, 20 frames forward and 20 frames backward, and processing a single frame takes about 15 minutes on the most advanced GPU. At 30 frames per second, 1 minute of video then takes 450 hours (almost 19 days). Oops... You'll agree this is nothing like the instant "Zoom it!" from the movies. Algorithms that take several days per frame turn up regularly. In research papers, higher quality usually matters more than processing time, since acceleration is a separate, difficult task, and it's easier to eat an elephant one bite at a time. That is the difference between real life and cinema...
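The 450-hour estimate is simple arithmetic. The sketch below assumes 30 frames per second, a constant 15 minutes per frame and a single GPU; `processing_time_hours` is a hypothetical helper, not part of any tool.

```python
def processing_time_hours(video_minutes: float, fps: float, minutes_per_frame: float) -> float:
    """Total compute time, assuming every frame takes the same time on one GPU."""
    frames = video_minutes * 60 * fps          # 1 minute at 30 fps = 1800 frames
    return frames * minutes_per_frame / 60     # convert minutes of compute to hours

hours = processing_time_hours(video_minutes=1, fps=30, minutes_per_frame=15)
print(hours)       # 450.0
print(hours / 24)  # 18.75 (days)
```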

The demand for algorithms that process video at a reasonable speed has spawned a separate direction, Fast Video Super Resolution, with 0.18 million papers. That count also includes "slow" papers that compare themselves against "fast" ones, so the number of papers actually proposing fast methods is smaller. Note that among "fast" approaches, the share of speculative ones (i.e., without genuine restoration) is higher, and the share of honestly restoring ones is correspondingly lower.

The picture, you'll agree, is becoming clearer. But that's certainly far from everything.

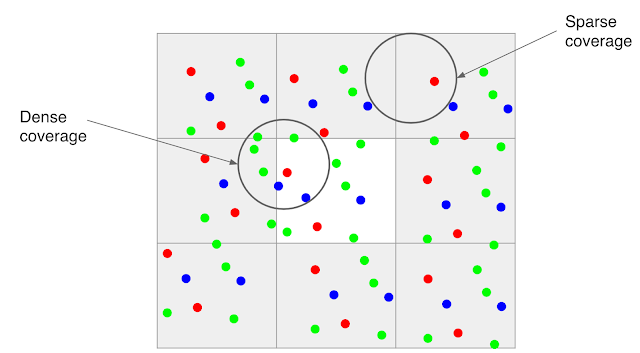

What other factors significantly affect getting good results?

First, noise has a very strong impact. Below is an example of 2x resolution restoration on very noisy video:

Source: author's materials

The main problem in this fragment is not even ordinary noise, but color moiré on the shirt, which is difficult to process. Some might say that large amounts of noise aren't a problem today. That's not the case. Look at data from dashcams and surveillance cameras at night (precisely when they're needed most).

However, moiré can also appear on relatively "clean" video in terms of noise, like the city shown below (the examples below are based on this work of ours):

Source: author's materials

Second, optimal restoration requires near-perfect estimation of motion between frames. Why this is hard is a large topic in its own right, but it explains why scenes with panning camera movement are often restored very well, while scenes with relatively chaotic motion are extremely hard to restore. Even with those, however, you can get quite decent results in some situations:

Source: author's materials

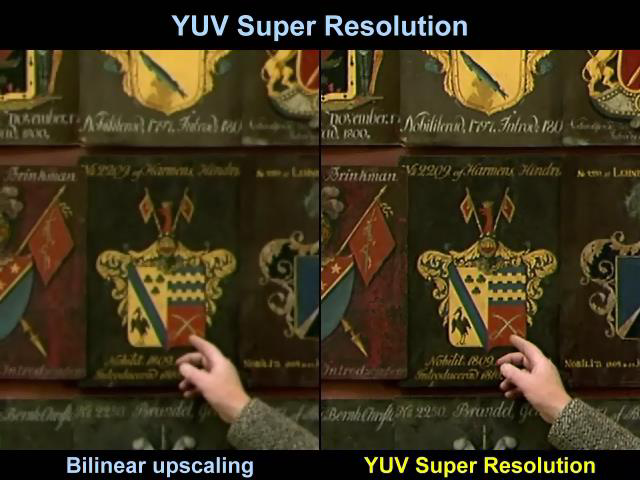

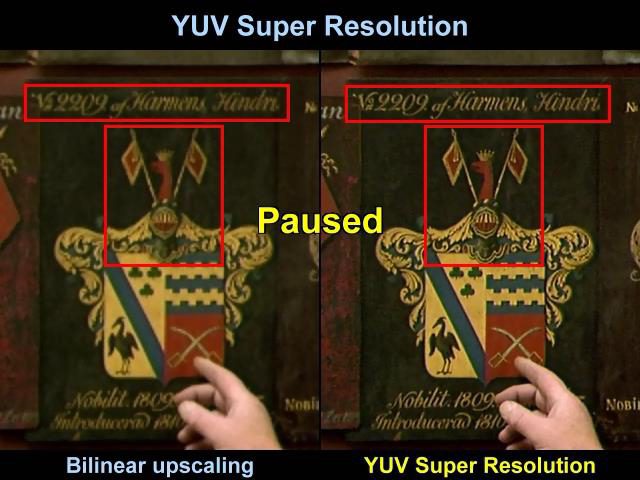
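To give a feel for what estimating motion between frames means in the simplest case, here is a generic textbook technique (not our method): estimate a single global shift between two 1D signals by cross-correlation, then refine it to sub-pixel precision by fitting a parabola through the correlation peak. The test signal (a Gaussian spot moved by 3.3 samples) and the function name are assumptions for illustration. One global shift is exactly the easy "panning" case; with chaotic motion, every region needs its own motion vector, and that is where estimation errors creep in.

```python
import numpy as np

def estimate_shift(reference: np.ndarray, moved: np.ndarray) -> float:
    """Estimate how far `moved` is shifted relative to `reference`, in samples.

    Integer part: position of the peak of the full cross-correlation.
    Fractional part: vertex of a parabola through the peak and its two neighbours.
    """
    corr = np.correlate(moved, reference, mode="full")
    peak = int(np.argmax(corr))
    # In "full" mode, lag 0 sits at index len(reference) - 1.
    integer_shift = peak - (len(reference) - 1)
    if 0 < peak < len(corr) - 1:
        left, centre, right = corr[peak - 1], corr[peak], corr[peak + 1]
        denom = left - 2 * centre + right
        fraction = 0.5 * (left - right) / denom if denom != 0 else 0.0
    else:
        fraction = 0.0  # peak on the border: no neighbours to refine with
    return integer_shift + fraction

x = np.arange(64, dtype=float)
frame_1 = np.exp(-((x - 20.0) ** 2) / (2 * 3.0 ** 2))  # a blurry bright spot
frame_2 = np.exp(-((x - 23.3) ** 2) / (2 * 3.0 ** 2))  # the same spot, moved by 3.3 samples
print(estimate_shift(frame_1, frame_2))  # close to 3.3
```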

Finally, here is an example of text restoration:

Source: author's materials

Here the background moves smoothly enough, and the algorithm has the opportunity to "go wild":

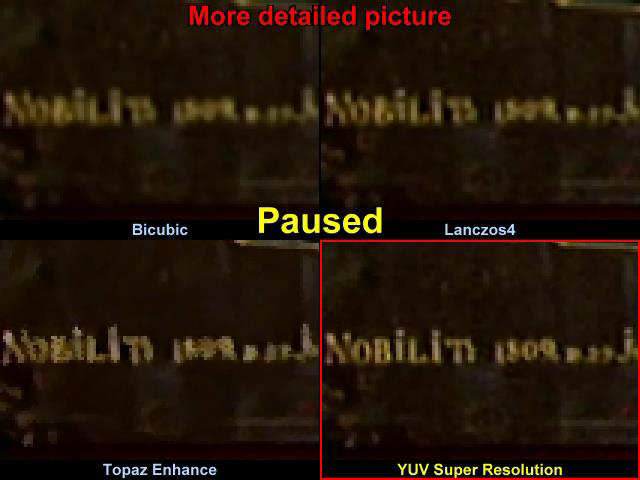

In particular, compare the very small text to the right of the hand, including against classical bicubic interpolation; the difference is striking:

You can see that with bicubic interpolation, reading the year is practically impossible. With Lanczos4, which is favored by semi-professional video resizers for its greater sharpness, the edges are sharper, of course, but reading the year is still impossible. We won't comment on the commercial Topaz, but with our method the text reads clearly and you can see that it's most likely the year 1809.
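Both classical methods are convolutions with a fixed kernel, which is why neither can recover the year: they only redistribute the samples already present in a single frame. The sketch below shows the two kernels and a minimal 1D resampler. It assumes the Keys cubic kernel with a = -0.5 (libraries differ in this parameter) and a Lanczos kernel with 4 lobes, the 8-sample neighbourhood behind OpenCV's `INTER_LANCZOS4`; the helper names are hypothetical, and a real 2D resizer applies such a kernel separably along rows and columns.

```python
import numpy as np

def cubic_kernel(x, a=-0.5):
    """Keys cubic convolution kernel (support |x| < 2)."""
    x = np.abs(np.asarray(x, dtype=float))
    out = np.zeros_like(x)
    near = x <= 1
    far = (x > 1) & (x < 2)
    out[near] = (a + 2) * x[near] ** 3 - (a + 3) * x[near] ** 2 + 1
    out[far] = a * (x[far] ** 3 - 5 * x[far] ** 2 + 8 * x[far] - 4)
    return out

def lanczos_kernel(x, lobes=4):
    """Lanczos kernel sinc(x) * sinc(x / lobes), zero outside |x| < lobes."""
    x = np.asarray(x, dtype=float)
    return np.where(np.abs(x) < lobes, np.sinc(x) * np.sinc(x / lobes), 0.0)

def upscale_1d(signal, factor, kernel, radius):
    """Resample a 1D signal to `factor` times as many samples with the given kernel."""
    signal = np.asarray(signal, dtype=float)
    n_out = len(signal) * factor
    # Centre of each output sample, mapped back to input coordinates.
    centres = (np.arange(n_out) + 0.5) / factor - 0.5
    out = np.empty(n_out)
    for j, c in enumerate(centres):
        base = int(np.floor(c))
        taps = np.arange(base - radius + 1, base + radius + 1)  # 2 * radius input samples
        weights = kernel(c - taps)
        idx = np.clip(taps, 0, len(signal) - 1)                 # replicate edge samples
        out[j] = np.sum(weights * signal[idx]) / np.sum(weights)
    return out

edge = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)
print(np.round(upscale_1d(edge, 4, cubic_kernel, radius=2), 2))
print(np.round(upscale_1d(edge, 4, lanczos_kernel, radius=4), 2))
```

Comparing the two upscaled step edges shows the trade-off: the wider Lanczos kernel, with its larger negative lobes, typically gives a steeper transition at the price of some ringing around the edge. Neither adds information that was not in the frame.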

Conclusions:

- Thousands of researchers worldwide work on resolution upscaling, and millions of papers have been published on the topic. Thanks to this, every smartphone has "digital zoom" that is usually objectively better than the enlargement algorithms of regular software, and every FullHD TV can display SD video, often even without characteristic resolution-change artifacts.

- Genuine image restoration from video is done by significantly fewer than 10% of those working on Super Resolution. Moreover, most restoration algorithms are extremely slow (up to several days of computation per frame).

- In most cases, restoration assumes that the high frequencies in the video are more or less preserved, so it does not work on video with significant compression artifacts. And since surveillance cameras are often configured to maximize the hours of footage they can store (i.e., the video is compressed harder and the high frequencies are "killed"), restoring such video becomes practically impossible.
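Why heavy compression "kills" exactly the high frequencies can be seen on a single row of 8 pixels. Block-based codecs transform pixels into frequency coefficients (a DCT, or a close integer approximation of it, in JPEG and common video codecs) and then quantize those coefficients; the coarser the quantizer, the smaller the file. The sketch assumes a 1D 8-point DCT and a single uniform quantization step for simplicity; real codecs use 2D transforms and typically quantize high frequencies even more coarsely, which only makes the effect stronger. `dct_matrix` and the pixel values are illustrative.

```python
import numpy as np

def dct_matrix(n: int = 8) -> np.ndarray:
    """Orthonormal DCT-II basis: row k holds the k-th frequency."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] = np.sqrt(1.0 / n)  # DC row
    return m

D = dct_matrix(8)

# One row of 8 pixels: mid-grey with fine alternating detail of +/-3 levels.
block = 100.0 + 3.0 * (-1.0) ** np.arange(8)

coeffs = D @ block                        # forward transform
step = 20.0                               # coarse quantizer = aggressive compression
quantized = np.round(coeffs / step) * step
restored = D.T @ quantized                # inverse transform (D is orthonormal)

print(np.count_nonzero(quantized[1:]))    # 0: every non-DC coefficient was zeroed
print(np.ptp(block), np.ptp(restored))    # 6.0 vs. practically 0: the detail is gone
```

Once those coefficients are rounded to zero, the information is simply not in the file any more, so no restoration algorithm can bring it back from that frame.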

Honest Industrial Multi-Frame Super Resolution

However, less than two years later a continuation of the work was published; it was used in the Google Pixel 3 and radically improved the phone's photo quality. This is already honest multi-frame Super Resolution: an algorithm that restores resolution from multiple frames.

The image above shows a comparison of results from Pixel 2 and Pixel 3, and the results look very good — the image has indeed become much sharper, and it's clearly visible that this is not "hallucination" but genuine detail restoration.

Let's briefly analyze the algorithm. Google's engineers started by changing how the Bayer pattern is interpolated (demosaicing).

Google's colleagues use the fact that the vast majority of smartphone photos are taken handheld — meaning the camera will shake slightly. When there's optical image stabilization (OIS), the camera sensor shifts to compensate for hand movement. But this compensation is not perfect — there remains a residual sub-pixel shift. It is precisely this small sub-pixel shift between frames that provides additional information that the algorithm uses to reconstruct real detail, simultaneously reducing noise.

For cases where the shift between frames is too small (for example, on a tripod), the OIS deliberately introduces a small displacement: the "hand shake" is generated synthetically so that the frames still differ.
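The core idea, that small sub-pixel shifts let several low-resolution frames sample different points of the scene, can be illustrated with classic shift-and-add. This is a textbook sketch, not Google's algorithm: it assumes a 1D, noise-free scene, ideal point sampling, 2x decimation and exactly known shifts, whereas the published Pixel 3 method works on raw sensor data and has to estimate motion and cope with regions where alignment fails. The helpers `capture` and `shift_and_add` are hypothetical.

```python
import numpy as np

def capture(scene: np.ndarray, offset: int, factor: int = 2) -> np.ndarray:
    """Hypothetical low-res sensor: keep every `factor`-th sample, starting at `offset`."""
    return scene[offset::factor]

def shift_and_add(frames, offsets, hi_len: int, factor: int = 2) -> np.ndarray:
    """Place each low-res frame onto the high-res grid at its known offset and average."""
    acc = np.zeros(hi_len)
    count = np.zeros(hi_len)
    for frame, offset in zip(frames, offsets):
        positions = offset + factor * np.arange(len(frame))
        acc[positions] += frame
        count[positions] += 1
    result = np.full(hi_len, np.nan)  # NaN marks grid cells that no frame covered
    covered = count > 0
    result[covered] = acc[covered] / count[covered]
    return result

scene = np.array([3.0, 9.0, 4.0, 8.0, 5.0, 7.0, 6.0, 6.0])  # fine detail at full resolution

# Handheld: the frames are offset by half a low-res pixel (one high-res sample).
handheld = shift_and_add([capture(scene, 0), capture(scene, 1)], [0, 1], len(scene))
print(handheld)  # identical to `scene`: full resolution recovered

# Tripod: both frames have the same offset, so half of the grid stays empty.
tripod = shift_and_add([capture(scene, 0), capture(scene, 0)], [0, 0], len(scene))
print(tripod)    # every odd position is NaN
```

With a half-pixel offset the two frames interleave and the full-resolution detail comes back; with identical frames (the tripod case) half of the grid stays empty, which is why the OIS adds artificial shake. When several frames land on the same grid cell, the same averaging step is what reduces noise.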

Google's results in restoring real detail are impressive. Unlike single-frame approaches, this method doesn't invent details — it recovers them from actual data spread across multiple frames.

Image Super Resolution in Industry: Yandex DeepHD Example

On Yandex's technology description page (marketing name DeepHD), the cited references point only to Image Super Resolution work. This means that counterexamples where the algorithm damages the image inevitably exist, and they occur more often than with "honest" restoration algorithms.

Yandex's advertisement states that DeepHD technology works in real-time, so today you can already watch television channels using it.

The claim of "real-time" processing deserves skepticism. Processing high-resolution content in real-time typically requires "disabling many critical quality-affecting features." The black-and-white examples shown suggest multiple algorithms may be working simultaneously, not just single-frame processing.
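A quick budget calculation shows why real-time claims deserve scrutiny. The sketch assumes Full HD at 25 frames per second and reuses the 15-minutes-per-frame figure of the slow restoration algorithms discussed earlier; it is illustrative arithmetic, not a measurement of DeepHD, and the function names are hypothetical.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame if processing must keep up with playback."""
    return 1000 / fps

def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Number of output pixels a real-time upscaler must produce every second."""
    return width * height * fps

def slowdown_vs_realtime(seconds_per_frame: float, fps: float) -> float:
    """How many times slower than real time an algorithm runs."""
    return seconds_per_frame * fps

print(frame_budget_ms(25))                # 40.0 (ms per frame)
print(pixels_per_second(1920, 1080, 25))  # 51840000
print(slowdown_vs_realtime(15 * 60, 25))  # 22500
```

A real-time method gets about 40 ms per frame, so it must be more than twenty thousand times faster than such an algorithm, which is rarely possible without sacrificing quality.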

Conclusions and Recommendations

One must understand that marketers frequently employ their tricks precisely because it works. There is a well-known advertising principle: show only the best examples, and hide the worst. Any Super Resolution algorithm (and not only SR) has cases where it works brilliantly and cases where it fails miserably. Marketing departments show only the former.

Be extremely skeptical when a company says: "Our technology makes everything better." The right question is always: "Show me the cases where it fails."

I hope you will all be less swayed by advertising, especially when the storytelling is top-notch and you really want to believe in a miracle!
